Broken Object-Level Authorization Still Breaks Modern APIs: How Engineering Teams Actually Fix BOLA

Teams have gotten much better at login security. They deploy SSO, MFA, modern identity providers, and short-lived tokens. But the BOLA API vulnerability still shows up in mature stacks because authentication is only the front door. BOLA happens after the session is already valid, when the application fails to prove whether this specific user, service, or workflow should be allowed to touch this specific object.

That is why broken object level authorization remains one of the most expensive API failures to miss. A signed token can confirm identity. It does not confirm that user_123 may read invoice_9001, export project_77, or mutate order_55. If your service trusts object IDs without re-checking ownership, tenancy, or role-scoped permissions, an attacker does not need to break login. They only need to ask for someone else’s object.

Why the BOLA API vulnerability survives modern stacks

The root problem is simple: most teams centralize authentication earlier than they centralize authorization.

A typical modern stack looks clean on paper:

- the gateway validates the token

- middleware parses claims

- downstream services trust the request

- object IDs flow between services

- background jobs act on messages

- caches speed up permission checks

That architecture can still be wide open to broken object level authorization if the service that actually reads or mutates the object never proves the caller is entitled to it.

Here is the engineering reality:

1) Token validation gets mistaken for authorization

A valid JWT proves the token is real. It does not prove the token holder may access object A instead of object B.

2) Microservices normalize “pass the ID, fetch the row”

Fast-moving teams often build handlers around object lookups by primary key. If the query is where id = :id, with no tenant or ownership constraint, you have a BOLA problem waiting to happen.

3) Gateways are good for coarse checks, not per-object decisions

API gateways are useful for authn, rate limits, IP controls, and broad route rules. They usually do not know enough about the target record, tenant, project membership, approval chain, or delegated permissions to make the final access decision correctly.

4) Internal traffic gets over-trusted

Many teams assume east-west traffic is safe because it is “inside the mesh.” That mindset creates dangerous shortcuts in admin APIs, worker callbacks, webhook consumers, and service-to-service endpoints.

5) Async systems lose actor context

An event says “export invoice 123.” The worker sees a valid job, but the original actor, tenant, scope, approval state, and policy context are missing or stale. The job succeeds when it should not.

6) Session maturity lags behind login maturity

This is where the session layer matters. Even with strong identity controls, authorization decisions fall apart when services use old scopes, stale entitlements, weak context propagation, or oversized service credentials.

What broken object level authorization looks like in real code

Here is the pattern that keeps causing trouble.

Vulnerable example

```javascript
app.get("/v1/invoices/:invoiceId", requireAuth, async (req, res) => {
  const invoice = await db.invoice.findUnique({
    where: { id: req.params.invoiceId }
  });

  if (!invoice) return res.status(404).json({ error: "not_found" });

  return res.json(invoice);
});
```

This endpoint verifies that the user is authenticated, but it never checks whether the user belongs to the same tenant, owns the invoice, or has a role that permits access.

An attacker only needs a valid session and a guessed, leaked, or sequential object ID.

Safer example

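```javascript
app.get("/v1/invoices/:invoiceId", requireAuth, async (req, res) => {
  // Assumes requireAuth sets req.user = { id, tenant_id, roles } from verified token claims.
  const isBillingAdmin = (req.user.roles || []).includes("billing.admin");

  const invoice = await db.invoice.findFirst({
    where: {
      id: req.params.invoiceId,
      // The tenant boundary always applies, using the caller's authenticated tenant.
      tenant_id: req.user.tenant_id,
      // Billing admins may read any invoice in their own tenant; everyone else
      // needs ownership or an explicit read grant.
      ...(isBillingAdmin
        ? {}
        : {
            OR: [
              { owner_user_id: req.user.id },
              { shared_with: { some: { user_id: req.user.id, can_read: true } } }
            ]
          })
    }
  });

  if (!invoice) return res.status(404).json({ error: "not_found" });

  return res.json(invoice);
});
```

This is better because the access rule is attached to the object lookup itself, not bolted on afterward.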

That small change matters. The safer your query shape, the fewer chances engineers have to “forget the second check.”

Common microservice failure patterns behind broken object level authorization

If you want to reduce BOLA exposure fast, hunt for these patterns first.

1) Gateway-authenticated, service-authorized-by-assumption

The gateway validates the token and injects identity headers. The downstream service trusts the headers and retrieves data without validating target ownership.

Typical smell:

```javascript
const userId = req.headers["x-user-id"];
const order = await orderRepo.getById(req.params.orderId);
return res.json(order);
```

This service knows who is calling, but it never asks whether that user may access this order.
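The fix below is a minimal sketch under these assumptions:

- The caller's identity comes from verified token claims, not from a raw `x-user-id` header.
- A hypothetical `orderRepo.getById` returns `{ id, tenant_id, owner_user_id }`.
- The `orders.support` role is illustrative only.

What matters is that the service compares the loaded order with the caller before it returns anything.

```javascript
// Sketch only. Hypothetical shapes:
//   actor = { user_id, tenant_id, roles } taken from verified token claims (not a raw x-user-id header)
//   order = { id, tenant_id, owner_user_id } loaded by the service itself
function canReadOrder(actor, order) {
  if (!actor || !order) return false;
  // Tenant boundary first: the record's own tenant_id is the authority.
  if (order.tenant_id !== actor.tenant_id) return false;
  // Owners may read their own orders; a hypothetical "orders.support" role may read any order in its tenant.
  return order.owner_user_id === actor.user_id || (actor.roles || []).includes("orders.support");
}

// Framework-free handler body so the decision is easy to test; a real repository call would be awaited.
function readOrder(orderRepo, actor, orderId) {
  const order = orderRepo.getById(orderId);
  // Same 404 for "missing" and "not yours", so callers cannot probe which IDs exist.
  if (!order || !canReadOrder(actor, order)) {
    return { status: 404, body: { error: "not_found" } };
  }
  return { status: 200, body: order };
}
```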

2) Tenant checks at the route layer, not the data layer

Many systems compare req.user.tenant_id with req.params.tenantId and call it good. That is not enough. Attackers can often reach objects whose real tenant binding does not match the route parameter.

Better pattern: always constrain object retrieval at the query or policy layer using the authoritative tenant on the record itself.
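To make the failure concrete, here is a self-contained sketch built on a hypothetical in-memory store. The caller requests `/tenants/tenant_a/documents/doc_77`. The route-layer check passes because the URL names the caller's own tenant, yet `doc_77` actually belongs to `tenant_b`.

```javascript
// Hypothetical in-memory store standing in for the documents table.
const documents = new Map([
  ["doc_1", { id: "doc_1", tenant_id: "tenant_a", title: "A roadmap" }],
  ["doc_77", { id: "doc_77", tenant_id: "tenant_b", title: "B contract" }],
]);

// Route-layer check only: compares the caller's tenant with a URL parameter, then fetches by bare ID.
function routeLayerOnly(user, params) {
  if (user.tenant_id !== params.tenantId) return null;
  return documents.get(params.documentId) ?? null;
}

// Data-layer check: the tenant stored on the record decides, whatever the URL says.
function dataLayerScoped(user, params) {
  const doc = documents.get(params.documentId);
  return doc && doc.tenant_id === user.tenant_id ? doc : null;
}

// Caller from tenant_a requests /tenants/tenant_a/documents/doc_77.
const user = { user_id: "u_a1", tenant_id: "tenant_a" };
const params = { tenantId: "tenant_a", documentId: "doc_77" };
console.log(routeLayerOnly(user, params)?.title); // prints: B contract  (tenant_b data leaks)
console.log(dataLayerScoped(user, params));       // prints: null
```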

3) Shared services returning sensitive objects by bare ID

Document, billing, messaging, support, and analytics services often evolve into “lookup engines.” When those services return data for any valid ID without caller-specific policy enforcement, they become perfect BOLA amplifiers.

4) Background workers acting on untrusted or incomplete events

A producer says “approve refund for transaction X.” The worker trusts the message because it came from an internal queue. But the message lacks strong actor context or policy proof, so the worker performs a privileged action without re-validating entitlement.
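A worker that refuses to act on trust alone might look like the sketch below. It assumes the message carries actor context captured at enqueue time, and that the store, refund, and audit functions are injected. All names here are hypothetical.

The worker does three things before it acts:

- It reloads the transaction from its own store.
- It compares tenants using the record's authoritative tenant.
- It checks both the action-specific role and the object state.

```javascript
// Sketch under assumptions: the message looks like
//   { action: "refund.approve", actor: { user_id, tenant_id, roles }, target: { transaction_id } }
// and deps are injected: loadTransaction(id), issueRefund(id), audit(entry). All names are hypothetical.
function handleRefundApproval(message, deps) {
  const { actor, target } = message;
  if (!actor || !actor.user_id || !actor.tenant_id || !target || !target.transaction_id) {
    deps.audit({ event: "authz.denied", reason: "missing_actor_context", action: message.action });
    return { ok: false, reason: "missing_actor_context" };
  }
  // Reload the object from the worker's own store instead of trusting fields in the message.
  const txn = deps.loadTransaction(target.transaction_id);
  // Roles copied into a message can go stale; a stricter design re-resolves them at this point.
  const allowed =
    Boolean(txn) &&
    txn.tenant_id === actor.tenant_id &&               // authoritative tenant from the record
    (actor.roles || []).includes("refunds.approve") && // permission for this specific action
    txn.status === "refund_requested";                 // object state matters too
  if (!allowed) {
    deps.audit({ event: "authz.denied", reason: "policy", action: message.action,
                 actor_id: actor.user_id, target_id: target.transaction_id });
    return { ok: false, reason: "policy" };
  }
  deps.issueRefund(txn.id); // the privileged side effect runs only after the check passes
  return { ok: true };
}
```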

5) Admin, support, or debug endpoints with weak boundaries

Support tooling is a classic BOLA hotspot. Teams create fast paths for “view as user,” “replay job,” “download export,” or “inspect object.” Those paths often have broad privileges, inconsistent audit logging, and weak object scoping.

6) Opaque IDs treated like access control

UUIDs, ULIDs, snowflakes, and random tokens help with enumeration resistance. They do not solve authorization. They only make guessing harder. If a leaked link, browser history, log line, or copied payload contains a valid ID, the access control decision still has to be correct.

Ownership validation patterns that actually work

This is where most engineering teams get real wins.

Pattern 1: Make the secure query the default query

Avoid repository methods that fetch by raw primary key alone.

Bad:

```sql
select * from invoices where id = $1;
```

Better:

```sql
select *
from invoices
where id = $1
  and tenant_id = $2
  and (
    owner_user_id = $3
    or exists (
      select 1
      from invoice_permissions p
      where p.invoice_id = invoices.id
        and p.user_id = $3
        and p.can_read = true
    )
  );
```

When the data-access layer makes the correct scoping easy, developers stop reinventing authz per endpoint.
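One way to make that query the default is a repository with no bare "get by ID" method at all. The sketch below wraps the scoped SQL above. It assumes an injected `query(text, values)` function that behaves like node-postgres `pool.query` and resolves to `{ rows }`. The class and method names are hypothetical.

```javascript
// A repository that exposes no raw primary-key lookup: every read requires actor context.
// `query(text, values)` is assumed to behave like node-postgres pool.query, resolving to { rows }.
class InvoiceRepository {
  constructor(query) {
    this.query = query;
  }

  // Builds the scoped statement; kept static so tests can inspect it without a database.
  static readableByIdStatement(actor, invoiceId) {
    return {
      text: `select *
             from invoices
             where id = $1
               and tenant_id = $2
               and (
                 owner_user_id = $3
                 or exists (
                   select 1 from invoice_permissions p
                   where p.invoice_id = invoices.id and p.user_id = $3 and p.can_read = true
                 )
               )`,
      values: [invoiceId, actor.tenant_id, actor.user_id],
    };
  }

  async findReadableById(actor, invoiceId) {
    if (!actor || !actor.tenant_id || !actor.user_id) throw new Error("actor context required");
    const { text, values } = InvoiceRepository.readableByIdStatement(actor, invoiceId);
    const result = await this.query(text, values);
    return result.rows[0] ?? null; // null covers both "missing" and "not yours"
  }
}
```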

Pattern 2: Never trust client-supplied ownership or tenant fields

If a request body contains owner_id, account_id, tenant_id, or organization_id, treat those as input to validate, not facts to trust.

Bad:

```json
{
  "tenant_id": "tenant_b",
  "document_id": "doc_77"
}
```

Good rule: derive tenant and actor context from the authenticated session and authoritative server-side relationships, not from client-controlled fields.
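A minimal sketch of that rule, assuming `session` holds verified claims `{ user_id, tenant_id }` and `body` is the parsed client JSON (the function and field names are hypothetical). A client-sent `tenant_id` is only compared against the session, never copied into the command.

```javascript
// Sketch: `session` holds verified claims { user_id, tenant_id }; `body` is client JSON.
// Function and field names are hypothetical.
function buildShareCommand(session, body) {
  // A client-sent tenant_id is checked, never adopted.
  if (body.tenant_id !== undefined && body.tenant_id !== session.tenant_id) {
    return { ok: false, error: "tenant_mismatch" };
  }
  if (typeof body.document_id !== "string" || body.document_id === "") {
    return { ok: false, error: "document_id_required" };
  }
  return {
    ok: true,
    command: {
      tenant_id: session.tenant_id, // from the session, always
      actor_id: session.user_id,    // from the session, always
      document_id: body.document_id // still untrusted: verify it belongs to tenant_id before use
    }
  };
}
```

Rejecting the mismatch, rather than silently ignoring it, has a side benefit: the attempt becomes visible in logs.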

Pattern 3: Re-check authorization at the point of action

A request may pass one check, then trigger multiple downstream actions:

- read object

- export object

- attach note

- notify webhook

- publish event

- generate report

Every sensitive action should validate the relevant permission for that action. “Can view” is not “can export.” “Can update status” is not “can reassign owner.”
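A permission map keyed by action makes the "can view is not can export" distinction explicit. Below is a sketch with hypothetical roles and action names that mirror the ones used elsewhere in this article. Each side effect runs its own check instead of inheriting an earlier one.

```javascript
// Hypothetical role-to-permission map; a Map avoids prototype keys like "toString" matching by accident.
const ROLE_PERMISSIONS = new Map([
  ["billing.user", new Set(["invoice.read", "invoice.update_status"])],
  ["billing.admin", new Set(["invoice.read", "invoice.update_status", "invoice.export", "invoice.reassign_owner"])],
]);

function can(actor, action, resource) {
  if (actor.tenant_id !== resource.tenant_id) return false;
  return (actor.roles || []).some((role) => ROLE_PERMISSIONS.get(role)?.has(action) ?? false);
}

// Each side effect checks its own action; an earlier "invoice.read" check grants nothing here.
function exportInvoice(actor, invoice, exportQueue) {
  if (!can(actor, "invoice.export", invoice)) {
    throw new Error("forbidden: invoice.export");
  }
  exportQueue.push(invoice.id);
}
```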

Pattern 4: Use row-level security as defense-in-depth, not as an excuse

For Postgres-backed systems, row-level security can reduce blast radius when application code is imperfect.

```sql
alter table invoices enable row level security;

create policy tenant_invoice_read
  on invoices
  for select
  using (
    tenant_id = current_setting('app.tenant_id')::uuid
  );

create policy tenant_invoice_write
  on invoices
  for update
  using (
    tenant_id = current_setting('app.tenant_id')::uuid
  );
```

This is powerful, but it does not replace application policy. It complements it. You still need action-level authorization, approval logic, and correct service context.
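The policies above only help if `app.tenant_id` is set correctly for every unit of work. The sketch below uses Postgres `set_config(name, value, true)`, which scopes the setting to the current transaction like `SET LOCAL`. That way a pooled connection does not carry one tenant's value into the next request.

It makes these assumptions:

- `client` exposes node-postgres-style `query(text, values)`.
- With node-postgres, `client` should be a single client checked out via `pool.connect()`, because a transaction must stay on one connection.
- The `withTenant` helper name is hypothetical.

Also keep in mind that superusers and roles with `BYPASSRLS` always bypass these policies. The table owner does too, unless the table uses `FORCE ROW LEVEL SECURITY`.

```javascript
// Sketch: run `work` inside a transaction with app.tenant_id set for that transaction only.
// `client` is assumed to offer node-postgres style query(text, values); withTenant is a hypothetical helper.
async function withTenant(client, tenantId, work) {
  await client.query("BEGIN");
  try {
    // is_local = true: the setting disappears at COMMIT/ROLLBACK, so pooled connections do not leak it.
    await client.query("select set_config('app.tenant_id', $1, true)", [tenantId]);
    const result = await work(client);
    await client.query("COMMIT");
    return result;
  } catch (err) {
    await client.query("ROLLBACK");
    throw err;
  }
}
```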

Pattern 5: Return not found for unauthorized object lookups where appropriate

For object access endpoints, 404 can be safer than 403 because it reduces object enumeration signal. Use it deliberately, and make sure your audit trail still records the denied attempt.
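A small helper can enforce both halves of that advice. Externally, "missing" and "not yours" look identical. Internally, the denial still lands in the audit trail. The sketch below is hypothetical and not tied to any framework.

```javascript
// Hypothetical helper: the caller sees the same 404 whether the object is missing or forbidden,
// while the audit trail keeps the real reason.
function objectResponse({ object, allowed }, auditContext, audit) {
  if (object && allowed) return { status: 200, body: object };
  if (object && !allowed) {
    audit({ event: "authz.denied", reason: "not_permitted", ...auditContext });
  }
  return { status: 404, body: { error: "not_found" } };
}
```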

Policy centralization patterns for microservices authorization

Most BOLA fixes fail long term because they live as scattered if statements.

What scales is policy centralization.

Option 1: A shared authorization library

This is the fastest place to start. Create one reusable authorization package with clear verbs and resources.

Example:

```javascript
await authz.assertCan({
  actor: req.user,
  action: "invoice.read",
  resource: {
    type: "invoice",
    id: invoice.id,
    tenant_id: invoice.tenant_id,
    owner_user_id: invoice.owner_user_id
  }
});
```

That is already much better than duplicating access logic in 40 handlers.
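What might sit behind `assertCan`? Here is one minimal, dependency-free sketch, not any particular library's API. Rules are plain functions keyed by action. Unknown actions are denied by default, and the tenant boundary is enforced once for every rule. The rule bodies and role names are hypothetical.

```javascript
class AuthorizationError extends Error {
  constructor(action, reason) {
    super(`forbidden: ${action} (${reason})`);
    this.name = "AuthorizationError";
    this.action = action;
    this.reason = reason;
  }
}

function createAuthz(rules) {
  function decide({ actor, action, resource }) {
    // Own properties only, so an action named "toString" never resolves to a prototype method.
    const rule = Object.prototype.hasOwnProperty.call(rules, action) ? rules[action] : undefined;
    if (!rule) return { allow: false, reason: "no_rule_for_action" }; // deny by default
    if (!actor || actor.tenant_id !== resource.tenant_id) return { allow: false, reason: "tenant_mismatch" };
    return rule(actor, resource) ? { allow: true, reason: "rule_matched" } : { allow: false, reason: "rule_denied" };
  }
  return {
    decide,
    can: (input) => decide(input).allow,
    // Synchronous here; `await authz.assertCan(...)` still works because awaiting a non-promise is allowed.
    assertCan(input) {
      const decision = decide(input);
      if (!decision.allow) throw new AuthorizationError(input.action, decision.reason);
    },
  };
}

// Example rules (hypothetical) for the invoice actions used in this article.
const authz = createAuthz({
  "invoice.read": (actor, r) => actor.id === r.owner_user_id || (actor.roles || []).includes("billing.admin"),
  "invoice.export": (actor) => (actor.roles || []).includes("billing.export"),
});
```

Returning a `reason` alongside the decision is deliberate: it feeds the denial telemetry discussed later.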

Option 2: Policy-as-code with an external decision engine

For larger environments, centralizing rules in OPA or a similar engine reduces drift.

Example Rego policy:

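```rego
package authz

# rego.v1 enables the `if` and `in` keywords on OPA 0.59+; OPA 1.x still accepts the import.
import rego.v1

default allow := false

# Owners may read their own invoices inside their tenant.
allow if {
	input.action == "invoice.read"
	input.actor.tenant_id == input.resource.tenant_id
	input.actor.user_id == input.resource.owner_user_id
}

# Billing admins may read any invoice inside their tenant.
allow if {
	input.action == "invoice.read"
	input.actor.tenant_id == input.resource.tenant_id
	"billing.admin" in input.actor.roles
}

# Export needs a dedicated role and is blocked while the invoice is on legal hold.
allow if {
	input.action == "invoice.export"
	input.actor.tenant_id == input.resource.tenant_id
	"billing.export" in input.actor.roles
	input.resource.status != "legal_hold"
}
```

Service-side decision input: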

```json
{
  "actor": {
    "user_id": "u_123",
    "tenant_id": "t_77",
    "roles": ["billing.user"]
  },
  "action": "invoice.read",
  "resource": {
    "id": "inv_9001",
    "tenant_id": "t_77",
    "owner_user_id": "u_123",
    "status": "open"
  }
}
```

This approach fits well with Cyber Rely’s own engineering-oriented content around API CI gates and policy enforcement in pipelines.
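The service then needs to send that input to the engine. Below is a sketch that uses OPA's REST Data API: `POST /v1/data/authz/allow` with a body of `{ "input": ... }` returns `{ "result": ... }` for the `allow` rule in the `authz` package.

It makes these assumptions:

- OPA listens on its default `http://localhost:8181`.
- Node 18+ provides a global `fetch`.
- The `isAllowedByOpa` name is hypothetical.

The function fails closed: any error or non-`true` result counts as a deny.

```javascript
// Sketch of a service asking OPA for a decision through its REST Data API.
// Assumptions: OPA runs at baseUrl with the `authz` package loaded; Node 18+ provides global fetch.
async function isAllowedByOpa(input, { baseUrl = "http://localhost:8181", fetchImpl = globalThis.fetch } = {}) {
  try {
    const res = await fetchImpl(`${baseUrl}/v1/data/authz/allow`, {
      method: "POST",
      headers: { "Content-Type": "application/json" },
      body: JSON.stringify({ input }),
    });
    if (!res.ok) return false;   // fail closed on decision-service errors
    const body = await res.json();
    return body.result === true; // an undefined result also means deny
  } catch {
    return false;                // fail closed on network errors too
  }
}
```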

Option 3: Relationship-based access control for complex sharing models

If your product has nested workspaces, delegated access, project sharing, support impersonation, partner portals, or cross-org collaboration, plain RBAC becomes brittle.

In those cases, model the relationship directly:

- user is member of workspace

- workspace owns project

- project contains document

- support agent may access case only with approved elevation

- export requires both role and relationship

That is often the point where “simple middleware” stops being simple.
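To show what "model the relationship directly" means, here is a toy sketch of relationship-based checks over in-memory tuples. The tuple format and the `canReadDocument` rule are hypothetical. Production ReBAC systems express such rules in a schema and index the tuples, rather than scanning arrays.

```javascript
// Hypothetical relationship tuples: [subject, relation, object].
const tuples = [
  ["user:alice", "member", "workspace:acme"],
  ["workspace:acme", "owns", "project:p1"],
  ["project:p1", "contains", "document:d1"],
  ["user:bob", "member", "workspace:other"],
];

function has(subject, relation, object) {
  return tuples.some(([s, r, o]) => s === subject && r === relation && o === object);
}

// Rule: a user may read a document if they are a member of a workspace
// that owns a project containing that document.
function canReadDocument(user, document) {
  const projects = tuples.filter(([, r, o]) => r === "contains" && o === document).map(([s]) => s);
  return projects.some((project) =>
    tuples
      .filter(([, r, o]) => r === "owns" && o === project)
      .some(([workspace]) => has(user, "member", workspace))
  );
}
```

Sharing, delegation, and approved support elevation then become new tuples and rules, not new `if` branches in handlers.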

Option 4: Centralize denial telemetry too

A good authz architecture does not only decide “allow” or “deny.” It also records:

- actor ID

- tenant ID

- action

- target resource type and ID

- reason for denial

- request ID / trace ID

- service and build version

That gives you a usable trail when someone starts probing object boundaries.
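The fields above can be captured in one structured event per denial. Below is a sketch with hypothetical function names, emitting a single JSON line that a log pipeline can index. The `actor` and `target` shapes match the audit assertion used in the CI section of this article.

```javascript
// Hypothetical builder for a structured deny event carrying the fields listed above.
function buildDenyEvent({ actor, action, target, reason, requestId, traceId, service, buildVersion }) {
  return {
    event: "authz.denied",
    timestamp: new Date().toISOString(),
    actor: { user_id: actor.user_id, tenant_id: actor.tenant_id },
    action,
    target: { resource_type: target.type, resource_id: target.id },
    reason,
    request_id: requestId,
    trace_id: traceId,
    service,
    build_version: buildVersion,
  };
}

// One JSON object per line; `write` defaults to stdout but can be swapped for a logger.
function emitDenyEvent(event, write = (line) => console.log(line)) {
  write(JSON.stringify(event));
}
```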

Our recent posts on forensics-ready APIs and the Digital Forensics & DFIR Triage topic category support exactly this mindset: authorization controls should leave evidence, not just status codes.

CI/CD tests to add so BOLA does not come back next sprint

This is where engineering practice usually separates from policy decks.

A real BOLA fix is not complete until it is guarded by tests.

1) Negative integration tests for cross-tenant access

Every sensitive object type should have at least one test where User A tries to access User B’s object.

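```javascript
const request = require("supertest");
// Hypothetical fixtures module: exports your Express app plus seeded tenant A token and tenant B invoice ID.
const { app, tenantAToken, tenantBInvoiceId } = require("./fixtures");

it("denies cross-tenant invoice access", async () => {
  const res = await request(app)
    .get(`/v1/invoices/${tenantBInvoiceId}`)
    .set("Authorization", `Bearer ${tenantAToken}`);

  // Either status is a correct denial; 404 also hides whether the invoice exists.
  expect([403, 404]).toContain(res.statusCode);
});
```

2) Role matrix tests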

Define the expected actions for each role and verify them automatically.

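```javascript
// Expected status per [role, action]; pick one denial convention (403 or 404) and use it consistently.
const cases = [
  ["billing.user", "invoice.read", 200],
  ["billing.user", "invoice.export", 403],
  ["billing.admin", "invoice.export", 200],
  ["support.agent", "invoice.read", 403]
];

// tokenFor and callAction are hypothetical helpers: mint a test token for a role,
// then call the route that corresponds to the action.
it.each(cases)("%s performing %s gets %i", async (role, action, expectedStatus) => {
  const res = await callAction(action, tokenFor(role));
  expect(res.statusCode).toBe(expectedStatus);
});
```

These tests catch quiet privilege creep.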

3) Sequential and neighbor-object tests

If your IDs are guessable or partially predictable anywhere, add tests that try:

- previous object ID

- next object ID

- same object in another tenant

- object cloned from another environment

- soft-deleted object

- archived object
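The previous/next cases can be generated rather than hand-written. Here is a sketch of a hypothetical `neighborIds` helper. It handles numeric IDs with an optional prefix, such as `inv_9001`, and keeps any zero padding.

```javascript
// Hypothetical helper: derive neighbor IDs to probe from a known, numerically sequential ID.
// Keeps any prefix ("inv_") and the zero-padding width of the numeric part.
// Note: Number() loses precision above 2^53, so very large numeric IDs would need BigInt.
function neighborIds(id, radius = 1) {
  const match = /^(.*?)(\d+)$/.exec(id);
  if (!match) return []; // not sequential-looking, nothing to derive
  const [, prefix, digits] = match;
  const n = Number(digits);
  const out = [];
  for (let d = -radius; d <= radius; d++) {
    if (d === 0 || n + d < 0) continue;
    out.push(prefix + String(n + d).padStart(digits.length, "0"));
  }
  return out;
}
```

In a test, each generated ID would be requested with the caller's token and expected to return 403 or 404 unless the caller legitimately has access to it.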

4) Async authorization tests

Do not stop at HTTP endpoints. Add tests for workers and consumers.

Example check:

- enqueue event with valid actor but foreign-tenant object

- ensure worker rejects it

- ensure denial is logged

- ensure downstream side effects do not occur
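Those four checks can be exercised without a real queue by injecting spies into the worker. Below is a framework-free sketch with hypothetical names. The worker re-checks tenant ownership of the target, and the scenario function returns what happened so that assertions can cover rejection, logging, and side effects.

```javascript
// Hypothetical export worker: re-checks tenant ownership of the target before doing anything.
function createExportWorker({ loadInvoice, startExport, log }) {
  return function handle(event) {
    const actor = event.actor || {};
    const targetId = event.target && event.target.resource_id;
    const invoice = targetId ? loadInvoice(targetId) : undefined;
    if (!invoice || !actor.tenant_id || invoice.tenant_id !== actor.tenant_id) {
      log({
        event: "authz.denied",
        action: event.action,
        actor: { user_id: actor.user_id },
        target: { resource_type: "invoice", resource_id: targetId },
      });
      return "rejected";
    }
    startExport(invoice.id);
    return "accepted";
  };
}

// The scenario from the list above: valid actor, foreign-tenant object.
function foreignTenantExportScenario() {
  const logs = [];
  const exportsStarted = [];
  const invoices = { inv_b1: { id: "inv_b1", tenant_id: "tenant_b" } };
  const handle = createExportWorker({
    loadInvoice: (id) => invoices[id],
    startExport: (id) => exportsStarted.push(id),
    log: (entry) => logs.push(entry),
  });
  const outcome = handle({
    action: "invoice.export",
    actor: { user_id: "u_a1", tenant_id: "tenant_a" },
    target: { resource_id: "inv_b1" },
  });
  return { outcome, logs, exportsStarted };
}
```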

5) Contract tests for auth context propagation

If service B depends on actor or tenant context from service A, test that the required fields exist and are not silently dropped.
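A framework-free way to express that check is to list the required fields as dot paths and report any that are missing after a hop. The required paths are `request_id`, `actor.user_id`, `actor.tenant_id`, `action`, and `target.resource_id`. Below is a sketch; the helper names are hypothetical.

```javascript
// Hypothetical contract check: confirm required auth-context fields survive a service hop.
const REQUIRED_CONTEXT_PATHS = ["request_id", "actor.user_id", "actor.tenant_id", "action", "target.resource_id"];

// Reads a dot path such as "actor.tenant_id"; returns undefined if any segment is missing.
function getPath(obj, path) {
  return path.split(".").reduce((value, key) => (value == null ? undefined : value[key]), obj);
}

// Returns the required paths that are absent or empty, so a test can assert the list is empty.
function missingContextFields(message, required = REQUIRED_CONTEXT_PATHS) {
  return required.filter((path) => {
    const value = getPath(message, path);
    return value === undefined || value === null || value === "";
  });
}
```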

Minimum contract:

```json
{
  "request_id": "required",
  "actor.user_id": "required",
  "actor.tenant_id": "required",
  "action": "required",
  "target.resource_id": "required"
}
```

6) CI gate for authorization regression tests

A lightweight GitHub Actions example:

```yaml
name: authz-regression

on: [push, pull_request]

jobs:
  authz-tests:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - uses: actions/setup-node@v4
        with:
          node-version: 20
      - run: npm ci
      - run: npm test -- authz
```

That is basic, but it is already better than relying on manual QA to notice broken object level authorization.

7) Assertion tests for audit events on deny paths

If a deny path stops emitting telemetry, investigations get harder even when access is blocked correctly.

```javascript
expect(logEvent).toMatchObject({
  event: "authz.denied",
  action: "invoice.read",
  actor: { user_id: "u_123" },
  target: { resource_type: "invoice" }
});
```

For teams already building pipeline guardrails, our 5 Proven CI Gates for API Security: OPA Rules You Can Ship is an adjacent internal read.

Use automation for exposure mapping, not as a substitute for authorization testing

Scanners are useful. They help you inventory exposed routes, obvious misconfigurations, and reachable attack surface. They can support triage.

They do not prove that your authorization model is sound.

BOLA is deeply contextual. It depends on:

- who the actor is

- what tenant or org they belong to

- what relationship they have to the object

- what action they are trying to perform

- whether the object is in a special state

- whether a downstream service re-checks the decision

That is why BOLA testing needs scenario-based API pentesting, not only endpoint enumeration.

Free Website Vulnerability Scanner tool by Pentest Testing Corp:

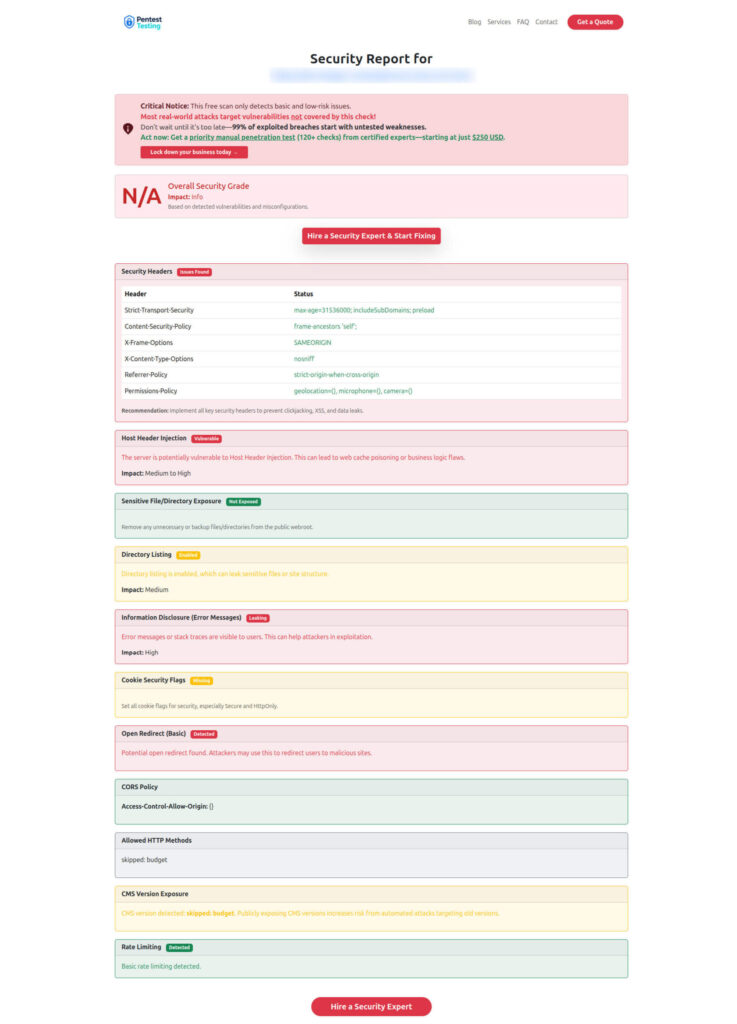

What a useful BOLA report should show

A weak report says, “IDOR found on endpoint X.”

A useful report tells engineering exactly how to fix the control.

It should show:

- affected endpoint and method

- actor role and tenant used in the test

- target object type and ownership mismatch

- reproducible request and response evidence

- whether the issue is read, write, delete, export, or approval impact

- root cause category

- precise remediation pattern

- regression tests to add

- whether abuse is visible in logs and traces

Sample report by the tool to check Website Vulnerability:

How engineering teams actually close BOLA fast

If you want a practical implementation order, use this one:

- Inventory high-value object types: invoices, orders, users, tickets, exports, webhooks, secrets, projects, reports.

- For each object type, define allowed actions by actor and tenant context.

- Move ownership and tenant scoping into the data-access pattern.

- Centralize policy decisions instead of scattering if statements.

- Add negative tests for cross-tenant and cross-user access.

- Add deny-path telemetry with request and trace correlation.

- Re-test async flows, support tooling, and admin surfaces.

- Run manual API pentesting against the real object model.

That is also the point where it makes sense to bring in API Penetration Testing Services or, where the same business logic crosses browser flows and APIs, Web Application Penetration Testing. Related, deeper engineering reads include Preventing Broken Access Control in RESTful APIs, Session Token Security in Modern SaaS, and 9 Powerful Asynchronous System Security Fixes.

Validate authorization logic with structured API pentesting

The fastest way to find a BOLA API vulnerability is to stop asking only, “Is the user logged in?” and start testing, “Can this exact actor perform this exact action on this exact object under this exact state?”

That is the difference between authentication coverage and authorization assurance.

If your team is fixing object-level access issues now, use a combination of:

- secure query patterns

- centralized policy checks

- denial telemetry

- CI/CD regression tests

- human-led API pentesting against real business flows

That is how engineering teams actually fix BOLA instead of rediscovering it six weeks later in another endpoint.

🔐 Frequently Asked Questions (FAQs)

Find answers to commonly asked questions about BOLA API Vulnerability.