9 Powerful Asynchronous System Security Fixes

Asynchronous workflows are the backbone of modern distributed systems: event-driven microservices, background jobs, ETL, notifications, billing, and “eventual consistency” everything. But the security model often lags behind the architecture. Teams lock down the API gateway, enforce SSO, add WAF rules—then quietly trust the queue.

That’s where incidents hide.

If you’re using Kafka, SQS, NATS, or RabbitMQ, this guide is a practical blueprint for asynchronous system security—with copy-paste patterns for identity, authorization, schema validation, replay defense, monitoring, and async observability across service boundaries.

Infrastructure as Code Security: 9 Powerful Guardrails to Stop Cloud Misconfigurations Before Deployment (Terraform, Kubernetes, CloudFormation + OPA/Sentinel/Checkov).

https://www.cybersrely.com/infrastructure-as-code-security-guardrails/

The Threat Model: What Goes Wrong in Message/Event Systems

When asynchronous systems fail, they fail in a few predictable ways:

Common attack paths (real-world patterns)

- Producer spoofing: a compromised workload produces “legitimate” events (e.g.,

role_granted,payout_approved). - Consumer impersonation: unauthorized services subscribe to sensitive topics/queues.

- Over-broad topic/queue permissions: “read/write everything” becomes a lateral-movement superpower.

- Poison messages & deserialization exploits: one crafted payload crashes workers or triggers unsafe code paths.

- Replay & re-drive abuse: old events get reprocessed (intentionally or accidentally) to re-trigger side effects.

- Ordering assumptions break: “we assumed order” becomes a data integrity and authorization issue.

- Telemetry blind spots: no trace continuity, no actor context, no evidence chain.

Treat your event broker as a security boundary, not just plumbing.

Fix 1) Enforce Strong Identity for Producers and Consumers

Goal: every producer/consumer has a verifiable identity that cannot be trivially spoofed.

Recommended identity model

- Workload identity per service (not per cluster).

- mTLS for transport identity where possible.

- Short-lived tokens (JWT/OIDC-style) for application identity, bound to workload identity.

Kafka (example: TLS + SASL + ACL foundations)

Broker-side (conceptual):

- TLS encryption enabled

- SASL mechanism enabled (SCRAM/OAUTHBEARER)

- Authorizer enabled for ACL enforcement

Client-side (Java, SASL/SCRAM example):

security.protocol=SASL_SSL

sasl.mechanism=SCRAM-SHA-512

sasl.jaas.config=org.apache.kafka.common.security.scram.ScramLoginModule required \

username="svc-orders-producer" password="${KAFKA_SCRAM_PASSWORD}";

ssl.truststore.location=/etc/ssl/truststore.jks

ssl.truststore.password=${TRUSTSTORE_PASSWORD}RabbitMQ (TLS + least-privileged users)

Create a service user with minimal permissions (vhost-scoped):

rabbitmqctl add_user svc_orders_consumer "$RABBIT_PASS"

rabbitmqctl add_vhost /orders

rabbitmqctl set_permissions -p /orders svc_orders_consumer "^orders\." "^orders\." "^$"NATS (accounts + user JWT, high-level pattern)

In NATS, use accounts for isolation and user/service JWTs for controlled access. Treat each service as its own principal with scoped publish/subscribe permissions.

Fix 2) Authorization Patterns That Actually Scale

Goal: producers can only publish what they should; consumers can only read what they should—by design.

Proven patterns for secure message queues

- Topic-per-domain + strict ACLs (

orders.*,billing.*) - Command vs event separation

- Commands (high privilege, validated, authenticated)

- Events (append-only facts, immutable)

- ABAC via claims (tenant, environment, service role)

- Deny-by-default on new topics/queues

AWS SQS (IAM policy example)

Producer allowed to send to one queue only:

{

"Version":"2012-10-17",

"Statement":[

{

"Sid":"AllowSendOnlyOrdersQueue",

"Effect":"Allow",

"Action":["sqs:SendMessage"],

"Resource":["arn:aws:sqs:REGION:ACCOUNT_ID:orders-queue"]

}

]

}Kafka ACLs (principle-focused model)

- Producers:

WRITEto exact topics - Consumers:

READonly where needed, plus group restrictions - Admin actions locked to break-glass identities only

Rule of thumb: if your queue permissions fit on one slide, you’re probably doing it right.

Fix 3) Schema Validation + Versioning + Safe Deserialization

Goal: consumers never “blindly execute” or “blindly parse” untrusted messages.

Use a secure event envelope (recommended)

Make every message look like this (even if the payload varies):

{

"event_id": "01JH9R7N9YJ0J3Y0A4NQ9Z6K8X",

"event_type": "orders.created",

"schema_version": 3,

"occurred_at": "2026-03-05T10:12:33Z",

"producer": "svc-orders",

"tenant_id": "t_123",

"traceparent": "00-4bf92f3577b34da6a3ce929d0e0e4736-00f067aa0ba902b7-01",

"payload": { "order_id": "o_789", "amount": 129.50, "currency": "USD" }

}JSON Schema validation (Node.js with AJV)

import Ajv from "ajv";

const ajv = new Ajv({ allErrors: true, removeAdditional: "all" });

const envelopeSchema = {

type: "object",

required: ["event_id", "event_type", "schema_version", "occurred_at", "producer", "payload"],

additionalProperties: false,

properties: {

event_id: { type: "string", minLength: 10, maxLength: 64 },

event_type: { type: "string", pattern: "^[a-z0-9]+(\\.[a-z0-9]+)+$" },

schema_version: { type: "integer", minimum: 1, maximum: 999 },

occurred_at: { type: "string" }, // enforce ISO8601 in stricter schema if needed

producer: { type: "string", minLength: 3, maxLength: 64 },

tenant_id: { type: "string" },

traceparent: { type: "string" },

payload: { type: "object" }

}

};

const validate = ajv.compile(envelopeSchema);

export function parseAndValidate(raw) {

const msg = JSON.parse(raw); // parse first

if (!validate(msg)) {

const err = new Error("Invalid event envelope");

err.details = validate.errors;

throw err; // fail closed

}

return msg;

}Safe deserialization (Python with Pydantic)

from pydantic import BaseModel, Field

from typing import Any, Dict, Optional

class Envelope(BaseModel):

event_id: str = Field(min_length=10, max_length=64)

event_type: str

schema_version: int = Field(ge=1, le=999)

occurred_at: str

producer: str

tenant_id: Optional[str] = None

traceparent: Optional[str] = None

payload: Dict[str, Any]

def parse_envelope(raw: bytes) -> Envelope:

# Avoid unsafe formats. Prefer JSON/Protobuf over pickle/yaml.

return Envelope.model_validate_json(raw)Versioning strategy that avoids outages

- Backward-compatible reads (new consumers can read old events)

- Forward-compatible tolerance (ignore unknown fields)

- Schema gates in CI (block breaking changes before they ship)

This is the heart of event broker hardening: schema discipline is security.

Fix 4) Replay Protection That Survives Real Operations

Goal: an attacker (or a misconfigured re-drive) can’t re-trigger side effects.

Add a replay defense header set

event_id(unique)occurred_at(timestamp)nonce(optional)signature(HMAC or asymmetric)

HMAC signing (producer) + verification (consumer)

Producer (Node.js):

import crypto from "crypto";

export function signEnvelope(envelope, secret) {

const body = JSON.stringify(envelope);

const sig = crypto.createHmac("sha256", secret).update(body).digest("hex");

return { body, sig };

}Consumer (Node.js):

import crypto from "crypto";

export function verify(body, sig, secret) {

const expected = crypto.createHmac("sha256", secret).update(body).digest("hex");

return crypto.timingSafeEqual(Buffer.from(sig), Buffer.from(expected));

}Timestamp window + nonce cache (simple, effective)

Consumer replay guard (Python pseudo):

import time

ALLOWED_SKEW_SECONDS = 300 # 5 minutes

def reject_if_old(occurred_at_unix: int):

now = int(time.time())

if abs(now - occurred_at_unix) > ALLOWED_SKEW_SECONDS:

raise Exception("Replay window exceeded")

def reject_if_seen_before(event_id: str, cache) -> None:

# cache can be Redis: SETNX with TTL

if not cache.setnx(f"seen:{event_id}", "1"):

raise Exception("Duplicate event_id (possible replay)")

cache.expire(f"seen:{event_id}", 86400)Fix 5) Idempotency for “Exactly Once” Outcomes

“Exactly once” delivery is not the same as exactly once side effects.

The practical model

- At-least-once delivery (common)

- Idempotent consumers (required)

- Transactional writes where it matters

Database uniqueness: the cleanest idempotency anchor

CREATE TABLE processed_events (

event_id TEXT PRIMARY KEY,

processed_at TIMESTAMP NOT NULL DEFAULT NOW()

);Consumer flow (pseudo):

- Insert

event_idintoprocessed_events - If insert fails (duplicate), stop

- Apply side effects

- Commit

That single pattern removes an entire class of async abuse.

Fix 6) Ordering Guarantees You Can Defend

If your security logic depends on ordering, you must engineer ordering, not hope for it.

Kafka ordering (partition key discipline)

- Choose a stable partition key (e.g.,

tenant_idororder_id) - Do not mix unrelated entities in one ordered stream

Producer (pseudo):

producer.send({

topic: "orders.events",

messages: [{ key: orderId, value: JSON.stringify(envelope) }]

});SQS FIFO ordering (MessageGroupId)

import boto3, json

sqs = boto3.client("sqs")

sqs.send_message(

QueueUrl=ORDERS_FIFO_URL,

MessageBody=json.dumps(envelope),

MessageGroupId=envelope["payload"]["order_id"],

MessageDeduplicationId=envelope["event_id"]

)Ordering is a distributed workflow protection: it prevents state confusion, double-apply bugs, and “late privilege” weirdness.

Fix 7) Monitoring + Alerting on Queue Anomalies and Attack Signals

Goal: detect abuse patterns early—before customers do.

Security-relevant queue signals

- Auth failures / ACL denies (producer or consumer)

- Sudden spikes in message size

- DLQ rate increase / redrive storms

- Consumer lag anomalies (flatline or runaway)

- Topic/queue creation events

- Permission changes (ACL/IAM) outside change windows

Prometheus-style alert rule examples

groups:

- name: async-security

rules:

- alert: DLQSpike

expr: rate(queue_dlq_messages_total[5m]) > 5

for: 10m

labels:

severity: high

annotations:

summary: "DLQ spike: possible poison messages or schema break"

- alert: AuthzDenySpike

expr: rate(queue_authz_denies_total[5m]) > 20

for: 5m

labels:

severity: high

annotations:

summary: "AuthZ denies spiking: possible credential misuse or ACL drift"Add “security canaries” to async paths

Intentionally emit a small set of known test events. Alert if:

- they’re not consumed

- they’re consumed by the wrong consumer

- they appear in a DLQ

- they’re processed too slowly

Fix 8) Async Observability: Trace Propagation Across Boundaries

Async architectures break tracing unless you carry context in the message.

Minimum trace fields to propagate

traceparent(W3C)request_idtenant_idactor_id(stable ID, avoid PII)

Produce with trace context (Node.js)

import { context, trace, propagation } from "@opentelemetry/api";

export function injectTrace(envelope) {

const carrier = {};

propagation.inject(context.active(), carrier);

// carrier might contain "traceparent"

return { ...envelope, traceparent: carrier.traceparent };

}Consume and continue the trace (Node.js)

import { context, propagation } from "@opentelemetry/api";

export function extractAndRun(envelope, fn) {

const ctx = propagation.extract(context.active(), { traceparent: envelope.traceparent });

return context.with(ctx, fn);

}This is how async observability becomes real observability, not disconnected logs.

Fix 9) Forensic Evidence Capture for Distributed Event Chains

When an incident spans queues, you need answers like:

- Which identity produced the event?

- What payload version was processed?

- Which consumer instance handled it?

- What changed in state, and when?

- Can we prove the event chain wasn’t tampered with?

Capture a “forensics-ready event record”

Store (securely) a structured record per processed event:

- event envelope (validated)

- broker metadata (topic/queue, partition, offset/message-id)

- consumer identity + version (

build_id,commit_sha) - auth context (service principal, tenant, environment)

- processing outcome (success/fail + reason)

Add a simple hash chain (tamper-evident)

import hashlib, json

def sha256(s: str) -> str:

return hashlib.sha256(s.encode("utf-8")).hexdigest()

def chain_record(prev_hash: str, record: dict) -> dict:

body = json.dumps(record, sort_keys=True, separators=(",", ":"))

record_hash = sha256(prev_hash + body)

return {"prev_hash": prev_hash, "record": record, "record_hash": record_hash}For deeper investigations, you’ll often want expert help to preserve and analyze evidence across systems. If you need a formal investigation workflow, see:

- Digital Forensic Analysis Services: https://www.pentesttesting.com/digital-forensic-analysis-services/

- Risk Assessment Services: https://www.pentesttesting.com/risk-assessment-services/

- Remediation Services: https://www.pentesttesting.com/remediation-services/

Implementation Checklist: Secure Message Queues in 30–90 Days

Week 1–2 (fast baseline)

- Inventory brokers, topics/queues, and consumers

- Remove wildcard permissions (“*” access)

- Enforce TLS everywhere

- Add event envelope + validation gates for top 3 streams

Week 3–6 (hardening)

- Workload identity + scoped authZ per service

- Idempotency keys + duplicate detection

- Replay controls (timestamp window + event_id cache)

- DLQ strategy + poison message handling

Week 7–12 (maturity)

- Trace propagation across async boundaries

- Security canaries + anomaly detection

- Forensics-ready event records + retention policy

- Run incident tabletop: “replay abuse” + “producer compromise”

If you want a structured, audit-friendly plan (especially for regulated environments), start with a risk assessment, then execute remediation with evidence artifacts:

- https://www.pentesttesting.com/risk-assessment-services/

- https://www.pentesttesting.com/remediation-services/

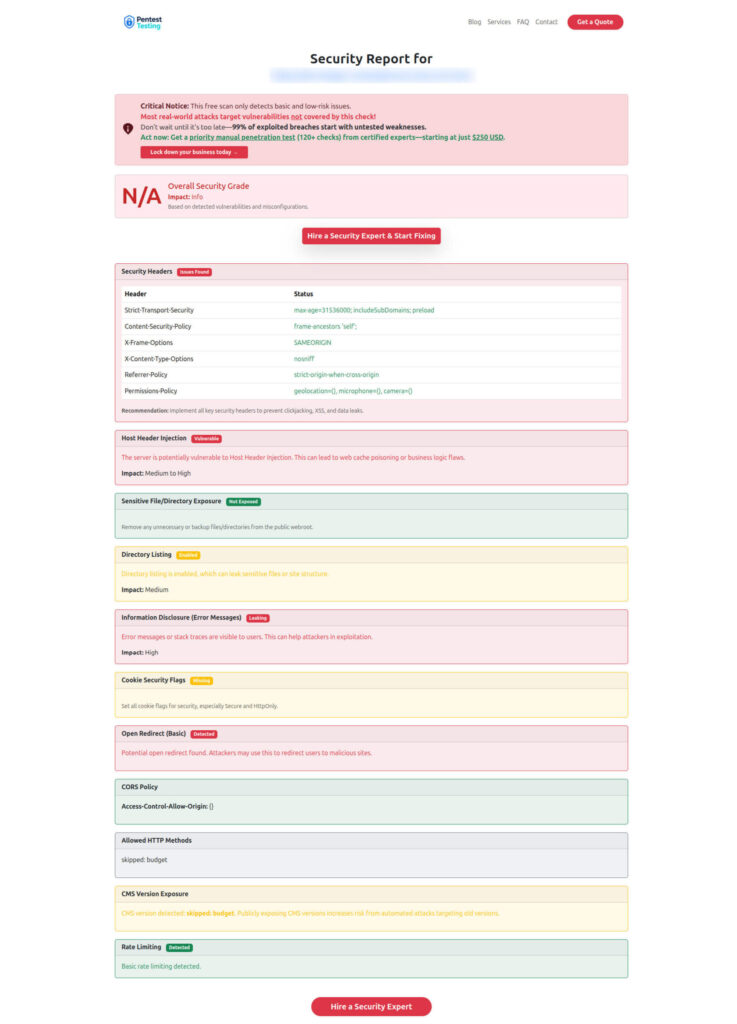

Where Our Free Tool Fits

Even if your main risk is inside queues, most compromises still start from the outside: exposed apps, weak endpoints, missing headers, vulnerable dependencies, or misconfigurations that lead to credential theft.

Free Website Vulnerability Scanner tool page

Sample assessment report to check Website Vulnerability

Recent Cyber Rely Reads to Pair With This Guide

If you’re implementing asynchronous system security, these recent playbooks pair naturally with queue hardening and distributed evidence capture:

- https://www.cybersrely.com/secure-deployments-guardrails-forensics-ready/

- https://www.cybersrely.com/secure-observability-pipeline/

- https://www.cybersrely.com/feature-flag-security-safe-canary-rollouts/

- https://www.cybersrely.com/observability-for-security-telemetry-enrichment/

- https://www.cybersrely.com/secure-feature-flags-prevent-abuse/

- https://www.cybersrely.com/forensics-ready-telemetry/

- https://www.cybersrely.com/supply-chain-ci-hardening-2026/

🔐 Frequently Asked Questions (FAQs)

Find answers to commonly asked questions about Asynchronous System Security.