Elevating Observability to Security: Merging Metrics, Traces, and Threat Context

Modern teams already have “observability”: dashboards, traces, uptime alerts, and plenty of logs. But when a real incident hits—account abuse, API key theft, privilege escalation, data export—you quickly learn an uncomfortable truth:

Operational observability ≠ security insight.

The good news: you don’t need a second parallel telemetry stack. You can turn the signals you already generate (metrics + traces + logs) into observability for security by adding the missing ingredient: threat context.

This guide is developer-first and implementation-heavy. You’ll get copy/paste patterns for:

- ingesting the right signals (without logging secrets),

- enriching telemetry with identity + geo anomalies + risk scores,

- correlating traces with audit events,

- building detection pipelines and “security lenses” in dashboards,

- wiring security observability into CI/CD gates,

- making your telemetry forensics-ready (audit trails + evidence preservation).

Want a practical playbook for runtime configuration hardening? Read our latest guide on feature flag security and safe canary rollouts with rollout governance, kill switches, and feature rollout telemetry: https://www.cybersrely.com/feature-flag-security-safe-canary-rollouts/

1) Observability vs. Security Telemetry: different goals, overlapping signals

Observability answers:

- Is the service healthy?

- Where is latency coming from?

- Which dependency is failing?

Security telemetry answers:

- Who did what, when, from where?

- Was it allowed by policy?

- What changed (and can we prove it)?

The overlap is your advantage. Metrics can show abusive patterns. Traces can show blast radius and propagation. Logs (especially structured audit logs) can show intent and identity. The trick is to standardize the “security shape” of your telemetry so it becomes queryable and defensible.

A practical mental model

Service health signals (SRE) + high-value security events (who/what/where) + context (risk/geo/identity)

→ observability-driven detection and faster incident response.

2) Essential signals to ingest (and the fields teams forget)

If you want “developer security observability” that actually works, start by capturing consistent identifiers and high-value metadata.

Minimum viable fields (every request)

- request_id (stable per request)

- trace_id / span_id

- service, env, version (build identity)

- route, method, status_code

- latency_ms

- actor (user/service), tenant_id (if multi-tenant)

- auth_method (session, JWT, API key, SSO), token_id (hashed), mfa (true/false when available)

- src_ip (careful with privacy), user_agent (trimmed), geo (derived)

- result (success/failure) + reason for authz denials (not raw exception dumps)
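Two fields in this list most often leak data: `token_id` and `user_agent`. Here is a minimal sketch of how to derive them. It assumes a server-side HMAC key that you manage. The function names, the `tok_` prefix, and both length limits are hypothetical choices, not a standard.

```python
import hashlib
import hmac
from typing import Optional


def token_fingerprint(token: str, key: bytes, length: int = 16) -> str:
    """Stable, non-reversible identifier for a credential (for the token_id field)."""
    # HMAC instead of a bare hash: without the server-side key, someone holding
    # the logs cannot confirm a guessed or leaked token against them.
    digest = hmac.new(key, token.encode("utf-8"), hashlib.sha256).hexdigest()
    return f"tok_{digest[:length]}"


def trim_user_agent(ua: Optional[str], max_len: int = 200) -> Optional[str]:
    """Keep user_agent useful for grouping without storing unbounded client input."""
    if ua is None:
        return None
    # Drop non-printable characters (e.g. newlines) that could forge extra log lines
    cleaned = "".join(ch for ch in ua if ch.isprintable())
    return cleaned[:max_len]
```

The same token always maps to the same `token_id`, so you can still count "how many requests used this key" without ever storing the key itself.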

“Don’t do this”

- Don’t log raw passwords, tokens, API keys, session cookies.

- Don’t dump full headers or bodies into logs “for debugging”.

- Don’t use free-form strings for audit events.

Instead, use security context logs: structured, minimal, queryable.
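The primary control is an allowlist. Key-based redaction is a backstop for nested payloads that slip through anyway. Below is a minimal sketch in Python. The header allowlist and the sensitive-key set are assumptions you should adapt; neither is a complete list.

```python
from typing import Any

# Assumed allowlist: headers not listed here are dropped, not masked.
SAFE_HEADERS = {"user-agent", "x-request-id", "x-correlation-id", "traceparent"}

# Assumed denylist of exact key names (lowercased) whose values must never be logged.
SENSITIVE_KEYS = {
    "password", "passwd", "secret", "client_secret", "token", "access_token",
    "refresh_token", "id_token", "authorization", "cookie", "set-cookie",
    "api_key", "apikey",
}


def safe_headers(headers: dict[str, str]) -> dict[str, str]:
    """Keep only allowlisted headers, normalizing names to lowercase."""
    return {k.lower(): v for k, v in headers.items() if k.lower() in SAFE_HEADERS}


def redact(value: Any) -> Any:
    """Recursively mask values stored under sensitive keys before an event is logged."""
    if isinstance(value, dict):
        return {
            k: "[REDACTED]" if str(k).lower() in SENSITIVE_KEYS else redact(v)
            for k, v in value.items()
        }
    if isinstance(value, list):
        return [redact(v) for v in value]
    return value
```

The key match is exact on purpose. A substring rule on "token" would also mask the hashed `token_id` field, which you do want to keep.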

3) Start with a canonical security event schema (version it)

A schema gives you: consistency, correlation, and sane querying across services.

```json
{
  "schema": "audit.v1",
  "ts": "2026-02-23T12:34:56.789Z",
  "service": "billing-api",
  "env": "prod",
  "version": "git:9f3c2a1",
  "event": "auth.login",
  "severity": "info",
  "result": "failure",
  "reason": "invalid_password",
  "request": {
    "request_id": "req_01J0...",
    "trace_id": "3b1c2d...",
    "method": "POST",
    "route": "/v1/login"
  },
  "actor": {
    "type": "user",
    "id": "u_12345",
    "tenant_id": "t_900",
    "roles": ["member"]
  },
  "client": {
    "ip": "203.0.113.10",
    "ua": "Mozilla/5.0",
    "geo": { "country": "US", "region": "CA", "city": "San Jose" }
  },
  "security": {
    "auth_method": "password",
    "mfa": false,
    "risk_score": 72,
    "signals": ["geo_anomaly", "password_spray_suspected"]
  }
}
```

JSON Schema check (CI gate)

Make your schema non-optional: validate in CI before deploy.

```shell
# example: validate audit events in fixtures against schema
node scripts/validate-audit-events.js
```

```javascript
// scripts/validate-audit-events.js (minimal)
// Uses ES module imports: set "type": "module" in package.json or rename to .mjs
import fs from "fs";
import Ajv from "ajv";

// If the schema uses "format" keywords (e.g. date-time), Ajv v8 also needs the ajv-formats plugin
const ajv = new Ajv({ allErrors: true });
const schema = JSON.parse(fs.readFileSync("schemas/audit.v1.json", "utf8"));
const validate = ajv.compile(schema);

// Fixtures: an array of sample audit events emitted by your tests
const fixtures = JSON.parse(fs.readFileSync("test/fixtures/audit-events.json", "utf8"));

let ok = true;
for (const evt of fixtures) {
  if (!validate(evt)) {
    ok = false;
    console.error("Invalid event:", evt.event, validate.errors);
  }
}

// A non-zero exit code fails the CI job
process.exit(ok ? 0 : 1);
```

This one step prevents “we can’t query anything during an incident” later.

4) Instrument request correlation (request_id + trace context)
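Trace context usually arrives in the W3C Trace Context `traceparent` header (`version-traceid-parentid-flags`, lowercase hex). An OpenTelemetry SDK propagator normally parses this header for you. If a service without an SDK still needs `trace_id` in its audit events, a minimal parser could look like the sketch below. The function name and output keys are hypothetical. It accepts only the four-field version-00 layout and rejects later versions, which is stricter than the spec requires.

```python
import re
from typing import Optional

# version (2 hex) - trace-id (32 hex) - parent-id (16 hex) - trace-flags (2 hex)
_TRACEPARENT = re.compile(r"^([0-9a-f]{2})-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def parse_traceparent(header: Optional[str]) -> Optional[dict]:
    """Extract correlation ids for audit events; return None if absent or malformed."""
    if not header:
        return None
    m = _TRACEPARENT.match(header.strip())
    if not m:
        return None
    version, trace_id, parent_id, flags = m.groups()
    # Version ff is invalid, and all-zero ids are invalid
    if version == "ff" or trace_id == "0" * 32 or parent_id == "0" * 16:
        return None
    return {
        "trace_id": trace_id,
        "parent_span_id": parent_id,  # the caller's span; your server span is its child
        "sampled": bool(int(flags, 16) & 0x01),
    }
```

If parsing fails, keep the request_id and start a fresh trace. Never copy an unvalidated header value into logs.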

Node.js (Express) middleware: request_id + safe header capture

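```javascript
import crypto from "crypto";

// Accept a caller-supplied request id only if it looks like an id (limits log injection).
// The pattern and length cap are assumptions; adjust them to your id format.
const REQUEST_ID_RE = /^[A-Za-z0-9_.:-]{1,128}$/;

export function requestContext(req, res, next) {
  const incoming = req.header("x-request-id");
  const requestId =
    incoming && REQUEST_ID_RE.test(incoming) ? incoming : `req_${crypto.randomUUID()}`;
  res.setHeader("x-request-id", requestId);

  // Only keep a safe allowlist of headers (no auth tokens)
  const safeHeaders = {
    // Client-controlled unless your own proxy sets it; don't treat it as trusted identity
    "x-forwarded-for": req.header("x-forwarded-for"),
    "user-agent": req.header("user-agent"),
    "x-correlation-id": req.header("x-correlation-id")
  };

  req.ctx = {
    requestId,
    route: req.path, // raw path (can be high-cardinality); a route template groups better
    method: req.method,
    safeHeaders
  };

  next();
}
```

Add security context to logs (without leaking secrets)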

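```javascript
export function auditLog(logger, event) {
  // Defensive defaults: every audit event carries the schema and build identity.
  // Caller fields are spread last, so they can override these defaults.
  // This helper does not scrub secrets: pass only allowlisted, already-safe fields.
  const safe = {
    schema: "audit.v1",
    ts: new Date().toISOString(),
    service: process.env.SVC_NAME,
    env: process.env.ENV,
    version: process.env.BUILD_ID,
    ...event
  };

  logger.info(safe);
}
```

Python (FastAPI): structured audit events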

```python
import json
import time
import uuid
from datetime import datetime, timezone

from fastapi import FastAPI, Request

app = FastAPI()


def audit(event: dict) -> None:
    event.setdefault("schema", "audit.v1")
    # One compact JSON object per line on stdout, for a log shipper to pick up
    print(json.dumps(event, separators=(",", ":")))


@app.middleware("http")
async def ctx_mw(request: Request, call_next):
    rid = request.headers.get("x-request-id") or f"req_{uuid.uuid4()}"
    start = time.perf_counter()  # monotonic clock for durations
    response = await call_next(request)
    latency_ms = int((time.perf_counter() - start) * 1000)

    audit({
        # UTC ISO-8601 with milliseconds and a "Z" suffix, matching the audit.v1 example
        "ts": datetime.now(timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z"),
        "service": "api",
        "env": "prod",
        "event": "http.request",
        "result": "success" if response.status_code < 400 else "failure",
        "request": {"request_id": rid, "method": request.method, "route": request.url.path},
        "http": {"status_code": response.status_code, "latency_ms": latency_ms},
    })

    response.headers["x-request-id"] = rid
    return response
```

5) Threat enrichment: identity, geo anomalies, risk scores

This is where “observability for security” becomes real. You don’t just see a spike—you see who, from where, and how risky it is.

Enrichment strategy (recommended)

- Identity context: user_id, tenant_id, roles, auth method, MFA present?

- Geo context: country/region derived from IP (via your internal resolver)

- Risk score: computed from signals (velocity, impossible travel, auth failures, new device, sensitive route)

- Normalization: emit the enrichment back into logs/traces as fields
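The strategy above can be written as one function that adds identity, geo, and risk fields to an audit.v1-style event. The `identity` and `geo` inputs stand in for whatever your auth layer and internal geo resolver return. `score_fn` can be any scorer, such as the `risk_score` example that follows. The names and input shapes here are hypothetical.

```python
from typing import Callable, Optional


def enrich_event(
    event: dict,
    identity: dict,
    geo: Optional[dict],
    signals: dict,
    score_fn: Callable[[dict], int],
) -> dict:
    """Return a copy of the event with actor, client.geo and security fields filled in."""
    out = {**event}  # never mutate the caller's event
    out["actor"] = {
        **event.get("actor", {}),
        "id": identity.get("user_id"),
        "tenant_id": identity.get("tenant_id"),
        "roles": list(identity.get("roles", [])),
    }
    client = {**event.get("client", {})}
    if geo:
        # Keep only the coarse geo fields the schema uses, skipping unknown values
        client["geo"] = {k: geo.get(k) for k in ("country", "region", "city") if geo.get(k)}
    out["client"] = client
    out["security"] = {
        **event.get("security", {}),
        "auth_method": identity.get("auth_method"),
        "mfa": bool(identity.get("mfa")),
        "risk_score": score_fn(signals),
        # Emit only the signals that fired, as a sorted list for stable queries
        "signals": sorted(name for name, on in signals.items() if on),
    }
    return out
```

Because scoring is passed in as a function, you can change the weights without touching the code that shapes the event.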

Example: a tiny risk-scoring function (the logic is language-agnostic)

```python
def risk_score(signals: dict) -> int:
    # Additive weights capped at 100; treat the numbers as starting points to tune
    score = 0
    if signals.get("new_device"):
        score += 15
    if signals.get("geo_anomaly"):
        score += 25
    if signals.get("auth_fail_burst"):
        score += 30
    if signals.get("sensitive_route"):
        score += 10
    if signals.get("no_mfa") and signals.get("admin_role"):
        score += 20  # an admin without MFA is riskier than either signal alone
    return min(score, 100)
```

Add enrichment to traces (security tags on spans)

Even if you already trace performance, add a “security lens”:

```javascript
// JavaScript with @opentelemetry/api; other OTel SDKs expose the same setAttribute pattern.
// `ctx` is your per-request security context, populated by the enrichment step.
import { trace } from "@opentelemetry/api";

const span = trace.getActiveSpan();
if (span) {
  span.setAttribute("enduser.id", ctx.userId);
  span.setAttribute("tenant.id", ctx.tenantId);
  span.setAttribute("auth.method", ctx.authMethod);
  span.setAttribute("security.risk_score", ctx.riskScore);
  span.setAttribute("security.geo_country", ctx.geoCountry);
  span.setAttribute("security.signals", ctx.signals.join(","));
}
```

Now you can answer: “Show me the trace waterfall for risky requests only.”
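In a tracing backend, that question is a filter on `security.risk_score`. The sketch below applies the same logic to exported span records so the semantics are explicit. It selects the traces where any span is risky, then returns every span in those traces in start order. The span dict shape (`trace_id`, `span_id`, `start_ns`, `attributes`) is an assumption, not any specific exporter's format.

```python
from collections import defaultdict


def risky_trace_waterfalls(spans: list[dict], min_risk: int = 70) -> dict[str, list[dict]]:
    """Group spans by trace; keep whole traces where some span has a high risk score."""
    by_trace: dict[str, list[dict]] = defaultdict(list)
    for span in spans:
        by_trace[span["trace_id"]].append(span)

    risky = {}
    for trace_id, trace_spans in by_trace.items():
        scores = [
            (s.get("attributes") or {}).get("security.risk_score") or 0
            for s in trace_spans
        ]
        if max(scores) >= min_risk:
            # Waterfall order: earliest span first
            risky[trace_id] = sorted(trace_spans, key=lambda s: s["start_ns"])
    return risky
```

This filters whole traces, not individual spans. That keeps the low-risk downstream spans (database calls, exports) under a risky request, and those spans are what show the blast radius.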

6) Correlation and baselining: tie anomalies to service health + policy

Security incidents often hide inside “normal” operational noise. Baselining helps you detect when security-relevant behaviors drift.

What to baseline (high signal, low noise)

- auth failures per IP / per user / per tenant

- token refresh frequency

- permission denials by route

- export/download events

- admin actions

- rate of 4xx/5xx on sensitive endpoints

- new device / new geo per account
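For the last item on this list (new device / new geo per account), a first-seen baseline is often enough to start with. Below is a minimal in-memory sketch. The class name, the `new_geo` signal name, and the warm-up threshold are hypothetical. A real deployment would keep `seen` in a shared store rather than in process memory.

```python
from collections import defaultdict


class FirstSeenBaseline:
    """Track which geos/devices each account has used; flag first sightings."""

    def __init__(self, warmup_events: int = 5):
        # Until an account has this many events, it has no baseline, so nothing counts as "new"
        self.warmup_events = warmup_events
        self.seen: dict[tuple[str, str], set[str]] = defaultdict(set)
        self.counts: dict[str, int] = defaultdict(int)

    def observe(self, account_id: str, geo_country: str, device_id: str) -> list[str]:
        """Record one event and return the signals it triggers."""
        signals = []
        established = self.counts[account_id] >= self.warmup_events
        for kind, value in (("geo", geo_country), ("device", device_id)):
            known = self.seen[(account_id, kind)]
            if established and value not in known:
                signals.append(f"new_{kind}")
            known.add(value)
        self.counts[account_id] += 1
        return signals
```

The warm-up keeps brand-new accounts from flagging every login. The output names line up with the `new_device` input used by the risk-scoring example.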

Example: “impossible travel” baseline (simple)

```python
def impossible_travel(prev_geo, new_geo, minutes_between):
    # very rough: replace with your own internal geo distance logic
    if prev_geo["country"] != new_geo["country"] and minutes_between < 30:
        return True
    return False
```

Correlate security events with performance regressions

Sometimes abuse causes latency. Sometimes latency causes retries that look like abuse. Correlate both.

Example query idea (SQL-like)

```sql
-- find risky auth attempts that also correlate with unusual latency
SELECT e.actor_id, e.client_ip, e.risk_score, r.latency_ms
FROM audit_events e
JOIN request_metrics r ON r.request_id = e.request_id
WHERE e.event = 'auth.login'
  AND e.risk_score >= 70
  AND r.latency_ms >= 1500
ORDER BY e.ts DESC
LIMIT 200;
```

7) Detection pipelines: thresholds, adaptive models, and dashboard “security lenses”

Start with thresholds (ship this week)

- auth_fail_burst: > N failures per IP per 5 minutes

- admin_action_from_new_geo

- export_event_rate_spike

- permission_denied_spike on privileged routes
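The `auth_fail_burst` threshold (> N failures per IP per 5 minutes) maps directly to a sliding-window counter. If per-IP labels would create too many Prometheus series in your environment, the same check can run in the app or in a stream processor instead. Below is a minimal sketch. The class name is hypothetical, and timestamps are in seconds.

```python
from collections import defaultdict, deque


class SlidingWindowCounter:
    """Count events per key over a trailing time window (e.g. auth failures per IP)."""

    def __init__(self, window_s: float, threshold: int):
        self.window_s = window_s
        self.threshold = threshold
        self.events: dict[str, deque] = defaultdict(deque)

    def record(self, key: str, ts: float) -> bool:
        """Record one event at time ts; return True when the key exceeds the threshold."""
        q = self.events[key]
        q.append(ts)
        # Evict timestamps that fell out of the window (assumes roughly ordered input)
        while q and q[0] <= ts - self.window_s:
            q.popleft()
        return len(q) > self.threshold
```

This sketch keeps every key forever. A production version would also expire keys that have gone idle.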

Prometheus-style alert rule example

```yaml
groups:
  - name: security-observability
    rules:
      - alert: AuthFailureBurstByIP
        # rate() is per second; for a "> N per 5 minutes" rule use
        # sum(increase(auth_login_fail_total[5m])) by (src_ip) > N
        expr: sum(rate(auth_login_fail_total[5m])) by (src_ip) > 10
        for: 10m
        labels:
          severity: high
        annotations:
          summary: "Auth failure burst (possible spray) from {{ $labels.src_ip }}"
```

Then add “adaptive” baselines (without going full ML platform)

A practical approach: z-scores per tenant / per route.

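```python
def z_score(x: float, mean: float, std: float) -> float:
    # No variance in the baseline yet: treat as "not anomalous" instead of dividing by zero
    if std <= 0:
        return 0.0
    return (x - mean) / std

# flag anomaly if z > 3 (tune per signal)
```

Build a “security lens” in your dashboards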

Instead of building brand-new dashboards, add filters:

- security.risk_score >= 70

- event in (admin.change_role, data.export, token.create)

- geo_anomaly = true

- auth.method = api_key + route = /v1/export

This keeps the developer workflow intact: same tools, smarter slices.
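Most dashboard tools express these filters as saved queries in their own query language. The Python predicate below pins down what each filter means against the audit.v1 fields. The slice names are made up for illustration. Mapping `geo_anomaly = true` onto `security.signals` is an assumption based on the schema example.

```python
LENS_EVENTS = {"admin.change_role", "data.export", "token.create"}


def lens_matches(e: dict) -> list[str]:
    """Return the names of the security-lens slices an audit.v1-style event falls into."""
    sec = e.get("security") or {}
    route = (e.get("request") or {}).get("route")
    matches = []
    if (sec.get("risk_score") or 0) >= 70:
        matches.append("high_risk")
    if e.get("event") in LENS_EVENTS:
        matches.append("sensitive_event")
    # The schema example records geo anomalies in security.signals
    if "geo_anomaly" in (sec.get("signals") or []):
        matches.append("geo_anomaly")
    if sec.get("auth_method") == "api_key" and route == "/v1/export":
        matches.append("api_key_export")
    return matches
```

Returning a list instead of a boolean lets each slice drive its own panel, while "any match" still works as a single combined filter.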

8) Developer workflows: integrate observability tooling with CI/CD gates

Security observability fails when it’s “someone else’s job.” Make it part of the engineering definition of done.

CI gate: required audit fields on sensitive routes

```shell
python3 scripts/check_audit_coverage.py --routes "/v1/export,/v1/admin/*"
```

```python
# scripts/check_audit_coverage.py (sketch)
import json
import sys

# Comma-separated route prefixes; a trailing "*" means "anything under this prefix"
SENSITIVE = set(sys.argv[sys.argv.index("--routes") + 1].split(","))

with open("telemetry-fixtures.json") as f:
    events = json.load(f)

missing = []
for e in events:
    route = e.get("request", {}).get("route", "")
    if any(route.startswith(r.replace("*", "")) for r in SENSITIVE):
        # Sensitive routes must carry who (actor), from where (client), and risk context (security)
        for field in ["actor", "client", "security"]:
            if field not in e:
                missing.append((route, field, e.get("event")))

if missing:
    for m in missing:
        print("Missing", m)
    sys.exit(1)

print("Audit coverage OK")
```

Policy-as-code gate (Rego-style) for telemetry rules

Use policy gates to block releases that remove critical security context.
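The rules below expect an input with `change.kind` and `change.removed_fields`. How you produce that input depends on your pipeline. One hypothetical approach is to diff the field paths of a representative audit event from the previous and current release fixtures. The function names and the choice of fixtures are assumptions.

```python
def field_paths(obj: dict, prefix: str = "") -> set[str]:
    """Flatten nested dict keys into dotted paths: {"actor": {"id": 1}} -> {"actor", "actor.id"}."""
    paths = set()
    for key, value in obj.items():
        path = f"{prefix}.{key}" if prefix else key
        paths.add(path)
        if isinstance(value, dict):
            paths |= field_paths(value, path)
    return paths


def telemetry_change(old_event: dict, new_event: dict) -> dict:
    """Build a policy input shaped like input.change in the Rego rules."""
    removed = field_paths(old_event) - field_paths(new_event)
    return {"change": {"kind": "telemetry", "removed_fields": sorted(removed)}}
```

Write the result to a JSON file and pass it to OPA as input (for example with `opa eval --input`). Any rule that fires then blocks the release.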

```rego
package telemetry.guardrails

# OPA v1 syntax ("contains" / "if"); on OPA 0.x, `import rego.v1` (0.59+) enables it
deny contains msg if {
    input.change.kind == "telemetry"
    input.change.removed_fields[_] == "actor.id"
    msg := "Do not remove actor.id from audit events"
}

deny contains msg if {
    input.change.kind == "telemetry"
    input.change.removed_fields[_] == "request.trace_id"
    msg := "Do not remove trace_id correlation"
}
```

9) Enabling incident readiness: audit trails + evidence preservation

Append-only audit table (Postgres example)

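```sql
CREATE TABLE audit_events (
  id          BIGSERIAL PRIMARY KEY,
  ts          TIMESTAMPTZ NOT NULL,
  schema      TEXT NOT NULL,
  service     TEXT NOT NULL,
  env         TEXT NOT NULL,
  event       TEXT NOT NULL,
  result      TEXT NOT NULL,
  request_id  TEXT,
  trace_id    TEXT,
  actor_id    TEXT,
  tenant_id   TEXT,
  client_ip   INET,
  risk_score  INT,
  payload     JSONB NOT NULL
);

-- Optional: prevent updates/deletes at the DB role level (recommended).
-- Example for a hypothetical application role "audit_writer" that may only append and read
-- (the role must not own the table, since owners keep full rights):
REVOKE ALL ON audit_events FROM audit_writer;
GRANT INSERT, SELECT ON audit_events TO audit_writer;
GRANT USAGE ON SEQUENCE audit_events_id_seq TO audit_writer;
```

Simple tamper-resistance: hash chaining (easy, effective)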

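```python
import hashlib
import json


def hash_event(evt: dict, prev_hash: str) -> str:
    # Canonical JSON (sorted keys, no whitespace) so the same event always hashes the same way
    body = json.dumps(evt, sort_keys=True, separators=(",", ":")).encode()
    # Each hash covers the previous one, so editing an earlier event breaks every later link
    return hashlib.sha256(prev_hash.encode() + body).hexdigest()
```

Now you can detect “history rewrites” in your audit trail.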
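To check the chain, recompute it from the stored events and compare the result against the stored hashes. Below is a minimal sketch. The all-zero genesis value and the helper names are assumptions. The check is only as trustworthy as the place where the hashes live, so keep at least the latest one somewhere the writers of `audit_events` cannot modify.

```python
import hashlib
import json
from typing import Optional

GENESIS = "0" * 64  # assumed starting value for the first link


def hash_event(evt: dict, prev_hash: str) -> str:
    body = json.dumps(evt, sort_keys=True, separators=(",", ":")).encode()
    return hashlib.sha256(prev_hash.encode() + body).hexdigest()


def build_chain(events: list[dict]) -> list[str]:
    """Hash each event together with the previous hash, starting from GENESIS."""
    hashes, prev = [], GENESIS
    for evt in events:
        prev = hash_event(evt, prev)
        hashes.append(prev)
    return hashes


def first_broken_link(events: list[dict], stored_hashes: list[str]) -> Optional[int]:
    """Return the index of the first mismatch (or missing/extra entry), or None if intact."""
    if len(events) != len(stored_hashes):
        return min(len(events), len(stored_hashes))
    for i, (got, want) in enumerate(zip(build_chain(events), stored_hashes)):
        if got != want:
            return i
    return None
```

The returned index tells responders where the trail stops being trustworthy, which is more useful than a bare pass/fail.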

Retention and access

- keep short “hot” retention for high-volume logs,

- keep longer retention for audit/security events,

- lock down access (least privilege),

- preserve evidence for incident response workflows.


10) Where the free scanner fits (fast signal before deeper work)

Even with strong security observability, you still need rapid exposure checks—especially when you suspect a web layer issue.

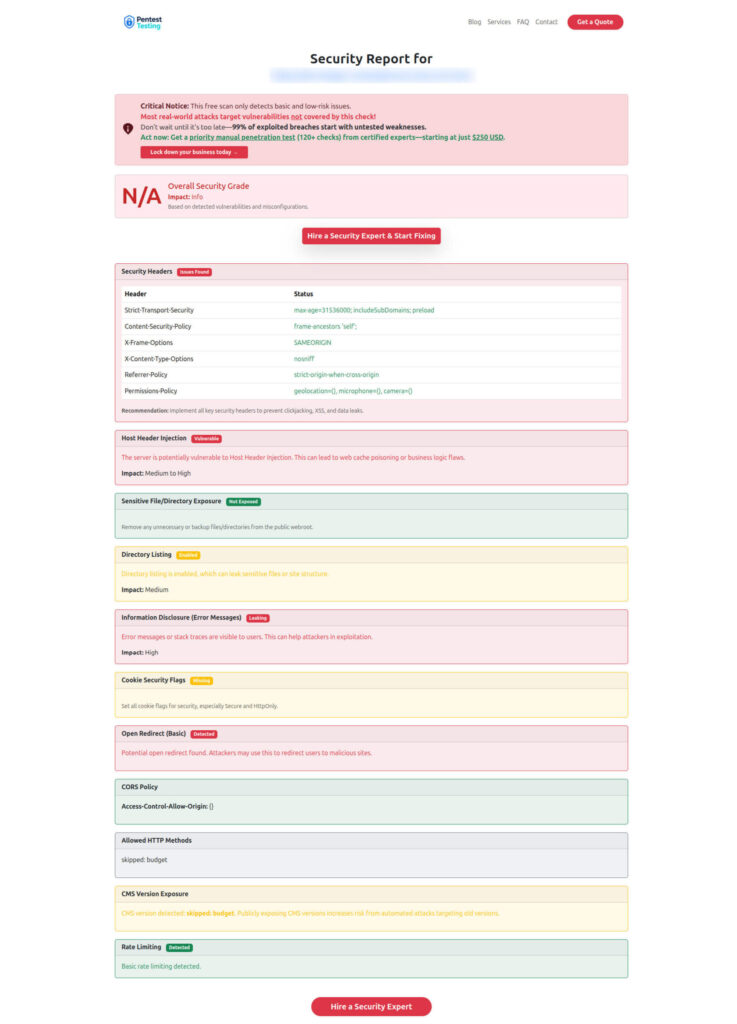

Use the Website Vulnerability Scanner as a quick front-door signal:

- validate obvious misconfigurations,

- catch common web weaknesses,

- generate a repeatable baseline report you can attach to remediation tickets.

Free Website Vulnerability Scanner tool Dashboard

Sample report to check Website Vulnerability

Implementation checklist (copy/paste)

- Define the audit.v1 schema and validate it in CI

- Enforce request_id + trace_id propagation

- Emit high-value events (auth, admin, export, token lifecycle, permission checks)

- Add threat enrichment (geo, identity, risk scoring)

- Build baselines for abuse signals (per tenant/route)

- Add threshold alerts + dashboard security filters

- Add CI/CD telemetry guardrails (no removal of key fields)

- Store append-only audit events + retention + access controls

- Add tamper-resistance for evidence confidence

- Run periodic exposure checks with the free scanner and track drift

Related Cyber Rely reading (implementation-heavy)

If you’re building observability for security with forensics-ready foundations, these will pair well:

- 7 Powerful Forensics-Ready Telemetry Patterns

- 7 Proven Patterns for Forensics-Ready Microservices

- 9 Powerful Forensics-Ready APIs for Microservices

- 7 Powerful Forensics-Ready SaaS Logging Patterns

- 7 Proven Forensics-Ready CI/CD Pipeline Steps

- 6 Powerful Security Chaos Experiments for CI/CD

- 9 Powerful Secure Feature Flags to Stop Abuse
