5 Proven Ways to Master Data Classification as Code

If your services handle PII, PHI, or cardholder data, you’ve probably felt this pain:

- Auditors asking where “all PII lives” while engineers grep for email and ssn.

- PCI DSS and SOC 2 controls talking about “cardholder data environments” and “logical access,” but your CI/CD only talks about builds and tests.

- Risk assessments and remediation plans living in spreadsheets, while your team ships from Git.

Data Classification as Code is how you close that gap.

Instead of treating data classification as a one-off Excel artifact, you describe sensitive data, flows, and required controls in YAML/JSON, keep it in Git, and wire it directly into CI/CD. That way:

- Risky changes to sensitive paths can trigger extra checks and approvals.

- External scanners and pentests focus on what actually matters.

- Compliance teams (and partners like Pentest Testing Corp) can turn CI output into audit-ready evidence for SOC 2, PCI DSS, ISO 27001, GDPR, and HIPAA.

If you’re responsible for building or owning AI systems in the EU, you may also want to read our deep-dive on how to turn regulatory requirements into code and CI checks: EU AI Act for Engineering Leaders: Turning High-Risk AI Systems into Testable, Auditable Code.

Below is a practical, code-heavy guide aimed at developers, DevOps/SRE, and engineering leaders building or running services that touch PII, PHI, or card data—especially if you’re already using Cyber Rely and Pentest Testing Corp for security services and assessments.

What is Data Classification as Code?

Data Classification as Code means:

- Your data classes (public, internal, confidential, restricted) and tags (PII, PHI, cardholder, secrets, logs) are machine-readable (YAML/JSON).

- Each service owns a small config file that lists data assets, endpoints, queues, and storage—plus the controls they must have (TLS, logging, tests, approvals).

- CI/CD jobs read those files and fail builds when sensitive pieces don’t meet the rules.

Instead of “security saying no” after the fact, classification becomes:

- Version-controlled (PRs, code review, history).

- Testable (CI jobs).

- Mappable to controls across PCI DSS, SOC 2, ISO 27001, GDPR, HIPAA. (pentesttesting.com)

In other words: classification stops being a slide deck and becomes an input to your pipelines.

The rest of this article walks through five proven ways to implement Data Classification as Code inside Cyber Rely–style SDLCs, with real snippets you can adapt.

1) Define a data-classification.yml for Every Service

Start with one small, per-service YAML file in your repo—treat it like docker-compose.yml or openapi.yml:

Example: data-classification.yml (billing service)

    service: billing-api
    owners:
      team: payments
      slack: "#team-payments"
      jira_project: BILLING

    data_levels:
      - name: public
        description: Public, marketing-safe information
      - name: internal
        description: Internal non-customer data
      - name: confidential
        description: Customer data with moderate impact
      - name: restricted
        description: Highly sensitive data (PII, PHI, card data, secrets)

    assets:
      - id: customer_db
        type: postgres.table
        location: "rds://billing-prod/customers"
        classification:
          level: restricted
          tags: [pii, cardholder]
          regulations: [pci_dss, gdpr]
        retention: "7y"
        encryption:
          at_rest: true
          in_transit: true

      - id: invoice_pdf_s3
        type: s3.bucket
        location: "s3://billing-prod-invoices"
        classification:
          level: confidential
          tags: [pii]
          regulations: [gdpr]

    routes:
      - path: /api/v1/cards
        method: POST
        description: Tokenize and store card details
        asset: customer_db
        classification:
          level: restricted
          tags: [cardholder, pii]
        requires:
          tls_min: "TLS1.2"
          mfa_for_admins: true
          logging_enabled: true
          no_pii_in_logs: true
          tests:
            - "tests/api/test_cards.py::test_pan_never_logged"

      - path: /api/v1/invoices/{id}
        method: GET
        description: Download invoice PDF
        asset: invoice_pdf_s3
        classification:
          level: confidential
          tags: [pii]
        requires:
          tls_min: "TLS1.2"
          logging_enabled: true

    internet_exposure:
      public_domain: "billing.example.com"
      contains_pii: true
      contains_cardholder: true
      scan_profile: "external-high"

A few tips:

- Keep it small: assets + routes + exposure is enough to start.

- Make classification.level and classification.tags match how your compliance team talks about card data (PCI DSS), personal data (GDPR), and PHI (HIPAA).

- Store this file at the root (data-classification.yml) or in /compliance/.

Once this exists for each service, you have a developer-owned map of where sensitive data lives and how it should be protected.
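Before you rely on the file in CI, it helps to check that it is internally consistent. The sketch below is one possible approach, not part of any official tooling: it takes the already-parsed config (what yaml.safe_load would return) and reports unknown levels and routes that point at undeclared assets. The function name validate_classification and the fallback level set are assumptions made for illustration.

```python
from typing import Any

# Assumed default level names, taken from the example file in this article.
ALLOWED_LEVELS = {"public", "internal", "confidential", "restricted"}


def validate_classification(config: dict[str, Any]) -> list[str]:
    """Return human-readable problems found in a parsed config."""
    problems: list[str] = []
    # Prefer the levels the file declares itself; otherwise use the defaults.
    declared = {lvl.get("name") for lvl in config.get("data_levels") or []}
    levels = declared or ALLOWED_LEVELS

    asset_ids: set[str] = set()
    for asset in config.get("assets") or []:
        asset_id = asset.get("id")
        if not asset_id:
            problems.append("asset without an id")
            continue
        if asset_id in asset_ids:
            problems.append(f"duplicate asset id: {asset_id}")
        asset_ids.add(asset_id)
        level = (asset.get("classification") or {}).get("level")
        if level not in levels:
            problems.append(f"asset {asset_id}: unknown level {level!r}")

    for route in config.get("routes") or []:
        label = f"{route.get('method', 'GET')} {route.get('path')}"
        level = (route.get("classification") or {}).get("level")
        if level not in levels:
            problems.append(f"{label}: unknown level {level!r}")
        if route.get("asset") not in asset_ids:
            problems.append(
                f"{label}: references undeclared asset {route.get('asset')!r}"
            )
    return problems


# Inline sample standing in for the parsed YAML; the second route is
# deliberately broken to show what the validator reports.
sample = {
    "data_levels": [{"name": "confidential"}, {"name": "restricted"}],
    "assets": [{"id": "customer_db", "classification": {"level": "restricted"}}],
    "routes": [
        {"path": "/api/v1/cards", "method": "POST", "asset": "customer_db",
         "classification": {"level": "restricted"}},
        {"path": "/api/v1/invoices/{id}", "method": "GET",
         "asset": "invoice_pdf_s3", "classification": {"level": "secret"}},
    ],
}

for problem in validate_classification(sample):
    print(problem)
```

Running a check like this first means later gates can assume every route maps to a known asset and a known level.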

2) Wire Data Classification as Code into CI/CD Gates

Next, let CI enforce some simple but powerful rules:

- If classification.level is restricted or confidential, then:

  - TLS must be enabled.

  - Logging rules must be defined (logging_enabled, no_pii_in_logs).

  - At least one test is listed in requires.tests.

Python script: ci/check_classification.py

    #!/usr/bin/env python3
    import sys
    import pathlib

    import yaml  # pip install pyyaml

    CONFIG_PATH = pathlib.Path("data-classification.yml")


    def main() -> int:
        if not CONFIG_PATH.exists():
            print("No data-classification.yml found, skipping check.")
            return 0

        data = yaml.safe_load(CONFIG_PATH.read_text())
        errors: list[str] = []

        for route in data.get("routes", []):
            level = (route.get("classification") or {}).get("level", "internal")
            path = route.get("path")
            method = route.get("method", "GET")

            # Only enforce strict rules on confidential/restricted
            if level not in {"confidential", "restricted"}:
                continue

            requires = route.get("requires") or {}

            if not requires.get("tls_min"):
                errors.append(f"{method} {path}: missing tls_min for {level} data")

            if not requires.get("logging_enabled"):
                errors.append(f"{method} {path}: logging_enabled=false for {level} data")

            if not requires.get("no_pii_in_logs"):
                errors.append(f"{method} {path}: no_pii_in_logs not enforced")

            tests = requires.get("tests") or []
            if not tests:
                errors.append(f"{method} {path}: no tests listed for high-sensitivity route")

        if errors:
            print("Data Classification as Code check failed:")
            for err in errors:
                print(" -", err)
            return 1

        print("Data Classification as Code check passed.")
        return 0


    if __name__ == "__main__":
        raise SystemExit(main())

GitHub Actions: block merges when rules aren’t met

    # .github/workflows/data-classification.yml
    name: data-classification-gates

    on:
      pull_request:
        paths:
          - "data-classification.yml"
          - "src/**"
          - "tests/**"

    jobs:
      classification:
        runs-on: ubuntu-latest
        steps:
          - uses: actions/checkout@v4
          - uses: actions/setup-python@v5
            with:
              python-version: "3.12"
          - name: Install dependencies
            run: pip install pyyaml
          - name: Run Data Classification as Code checks
            run: python ci/check_classification.py

This does a few important things for PCI DSS, SOC 2, ISO 27001, and GDPR/HIPAA audits:

- Shows that changes to sensitive paths are gated by automated checks.

- Produces pipeline logs and artifacts that map cleanly to secure SDLC and logging requirements (PCI DSS 6/10, SOC 2 CC6/CC7, ISO 27001 A.8/A.12).

If you already use policy-as-code / CI gates (for example, from Cyber Rely’s guides on CI/CD compliance and API security), this becomes one more input into those gates rather than a separate process.
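If you want the gate to leave behind something more durable than console output, one option is to write a small JSON evidence record per run. The following is a minimal sketch under stated assumptions: the function name build_evidence and every field name in the record are hypothetical, chosen for illustration rather than taken from any audit standard.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_evidence(config_text: str, errors: list[str], commit: str) -> dict:
    """Summarise one gate run as a JSON-serialisable evidence record."""
    return {
        "check": "data-classification-gates",
        "commit": commit,
        # Hash of the exact config that was evaluated, so the record can be
        # tied back to a specific version of data-classification.yml.
        "config_sha256": hashlib.sha256(config_text.encode("utf-8")).hexdigest(),
        "passed": not errors,
        "errors": errors,
        "generated_at": datetime.now(timezone.utc).isoformat(),
    }


# Example with placeholder inputs; in CI you would pass the real file
# contents and the error list produced by the check script.
record = build_evidence("service: billing-api\n", [], commit="abc1234")
print(json.dumps(record, indent=2))
```

In GitHub Actions the commit is available as the GITHUB_SHA environment variable, and the resulting file can be stored with the actions/upload-artifact action so it outlives the job log.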

3) Scan Tagged Internet-Facing Assets and Push Findings with Labels

Once the YAML tells you which domains and APIs are internet-facing and hold PII/PHI/card data, you can:

- Focus external scans on those assets.

- Tag every finding with the same classification labels used in your YAML.

- Send that into GitHub/Jira with the right labels so triage is fast and audit-ready.

Script: export high-risk URLs to scan

    #!/usr/bin/env python3
    import pathlib

    import yaml

    data = yaml.safe_load(pathlib.Path("data-classification.yml").read_text())

    urls_to_scan: list[str] = []

    internet = data.get("internet_exposure") or {}
    if internet.get("contains_pii") or internet.get("contains_cardholder"):
        domain = internet.get("public_domain")
        if domain:
            urls_to_scan.append(f"https://{domain}")

    for url in urls_to_scan:
        print(url)

You can wire this into your CI to produce a list of targets for your external scanner (including Cyber Rely’s preferred free Website Vulnerability Scanner at free.pentesttesting.com). (DEV Community)

Using the free Website Vulnerability Scanner in your workflow

At Cyber Rely and Pentest Testing Corp, we often recommend teams:

- Visit https://free.pentesttesting.com/.

- Paste the URLs exported from data-classification.yml.

- Run quick scans before major releases.

- Attach before/after reports to Jira/GitHub tickets as evidence artifacts.

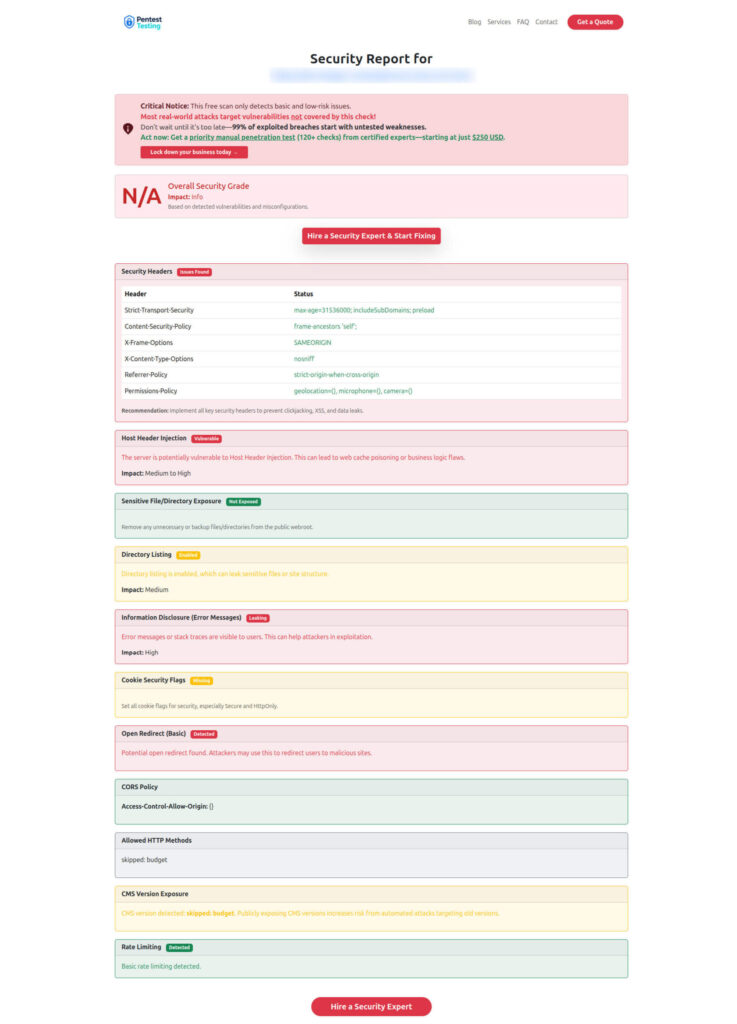

In your blog or internal docs, you can embed two screenshots around this step:

- The tool homepage.

- A sample report from the Website Vulnerability Scanner.

Script: enrich scanner results with classification labels

Assume your scanner exports a CSV like:

    target,severity,id,title
    https://billing.example.com,High,XSS-001,Reflected XSS on /pay
    https://billing.example.com,Medium,HEADERS-002,Missing HSTS

You can merge this with data-classification.yml and produce JSON ready for GitHub/Jira:

    #!/usr/bin/env python3
    import csv
    import json
    import pathlib

    import yaml

    root = pathlib.Path(".")
    classification = yaml.safe_load((root / "data-classification.yml").read_text())

    internet = classification.get("internet_exposure") or {}

    labels = []
    if internet.get("contains_cardholder"):
        labels.append("data:cardholder")
    if internet.get("contains_pii"):
        labels.append("data:pii")

    out = []
    with (root / "scan-results.csv").open() as f:
        reader = csv.DictReader(f)
        for row in reader:
            out.append(
                {
                    "summary": row["title"],
                    "target": row["target"],
                    "severity": row["severity"],
                    "scanner_id": row["id"],
                    "labels": labels + [
                        f"service:{classification.get('service','unknown')}",
                        "source:external-scan",
                    ],
                }
            )

    print(json.dumps(out, indent=2))

You can then post this JSON to your issue tracker API so that every vulnerability on a high-sensitivity service arrives pre-labeled with:

- The service name.

- Data classification tags.

- Whether it came from an external scan.

That makes it trivial for risk/compliance teams (or Pentest Testing’s Risk Assessment Services) to filter and prioritize.
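As a rough illustration of the posting step, here is a sketch that turns one enriched finding into a payload for GitHub's "create an issue" REST endpoint and prepares the request without sending it. The repository name, token placeholder, title format, and helper names (to_github_issue, build_request) are assumptions for this example; adapt them to your tracker.

```python
import json
import urllib.request


def to_github_issue(finding: dict) -> dict:
    """Convert one enriched finding into a GitHub issue payload."""
    return {
        "title": f"[{finding['severity']}] {finding['summary']}",
        "body": (
            f"Target: {finding['target']}\n"
            f"Scanner ID: {finding['scanner_id']}\n"
        ),
        # De-duplicate and sort so repeated runs produce identical payloads.
        "labels": sorted(set(finding["labels"])),
    }


def build_request(repo: str, token: str, payload: dict) -> urllib.request.Request:
    """Prepare (but do not send) the POST request for the GitHub REST API."""
    return urllib.request.Request(
        url=f"https://api.github.com/repos/{repo}/issues",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",
            "Accept": "application/vnd.github+json",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# One row shaped like the JSON produced by the enrichment script.
finding = {
    "summary": "Reflected XSS on /pay",
    "target": "https://billing.example.com",
    "severity": "High",
    "scanner_id": "XSS-001",
    "labels": ["data:cardholder", "data:pii",
               "service:billing-api", "source:external-scan"],
}

payload = to_github_issue(finding)
# "example-org/billing-api" and "<token>" are placeholders, not real values.
request = build_request("example-org/billing-api", "<token>", payload)
print(request.get_method(), request.full_url)
```

To actually create the issue, pass the prepared request to urllib.request.urlopen from a CI job that has a token with permission to write issues.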

4) Drive Extra Approvals, Logging, and TLS from Classification

Now that your YAML can be trusted, you can use it to drive review rules and runtime behavior.

Generate CODEOWNERS entries from classification

If a route is restricted, you might want security + data owners automatically pulled in as reviewers.

    #!/usr/bin/env python3
    import pathlib

    import yaml

    data = yaml.safe_load(pathlib.Path("data-classification.yml").read_text())

    owners = data.get("owners") or {}
    team_slug = owners.get("team", "team-security")

    restricted_paths: list[str] = []

    for route in data.get("routes", []):
        level = (route.get("classification") or {}).get("level")
        if level == "restricted":
            path = route.get("path")
            # Map API path → folder pattern (adapt to your routing)
            restricted_paths.append(f"src{path.replace('/api', '')}/*")

    restricted_paths = sorted(set(restricted_paths))

    with open("CODEOWNERS.generated", "w") as f:
        for pattern in restricted_paths:
            f.write(f"{pattern} @{team_slug}-owners\n")

Then, in CI:

- Generate CODEOWNERS.generated.

- Validate that CODEOWNERS includes those lines (or import them automatically).

- Combine with GitHub/GitLab’s branch protection rules to require approvals from the generated owners (@payments-owners for the billing example above) whenever restricted code changes.
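The validation step can be as small as a set comparison. The sketch below is an assumption about how you might do it, not a GitHub feature: the helper name missing_codeowners is hypothetical, and it only accepts exact matches of pattern plus owners, so a real CODEOWNERS line that lists extra owners would be reported as missing.

```python
def missing_codeowners(generated: str, actual: str) -> list[str]:
    """Return generated rules that do not appear in the real CODEOWNERS text."""

    def rules(text: str) -> set[tuple[str, ...]]:
        parsed = set()
        for line in text.splitlines():
            line = line.split("#", 1)[0].strip()  # drop comments and blanks
            if line:
                # Normalise whitespace by splitting into (pattern, owner, ...).
                parsed.add(tuple(line.split()))
        return parsed

    actual_rules = rules(actual)
    return [
        " ".join(rule)
        for rule in sorted(rules(generated))
        if rule not in actual_rules
    ]


# Sample file contents; in CI you would read the two files from disk.
generated_text = "src/v1/cards/* @payments-owners\n"
actual_text = "# Default owners\n*   @example-org/platform\n"

for rule in missing_codeowners(generated_text, actual_text):
    print("missing from CODEOWNERS:", rule)
```

A CI job can exit non-zero whenever the returned list is non-empty, which keeps the hand-maintained CODEOWNERS file in step with the classification.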

Enforce logging and TLS at runtime

You can also have your services load classification at startup and assert that runtime config matches expectations:

Example (Node.js / Express middleware):

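    // config/dataClassification.js
    const fs = require("fs");
    const yaml = require("js-yaml");

    function loadClassification() {
      const raw = fs.readFileSync("data-classification.yml", "utf8");
      return yaml.load(raw);
    }

    const classification = loadClassification();

    // The YAML uses OpenAPI-style params ({id}); Express uses :id.
    function toExpressPath(path) {
      return path.replace(/\{(\w+)\}/g, ":$1");
    }

    function requireSecureLogging(req, res, next) {
      // req.route is only set once Express has matched a route, so attach
      // this middleware to the route itself rather than globally.
      const path = req.route ? req.baseUrl + req.route.path : undefined;
      const method = req.method;

      const sensitiveRoute = classification.routes?.find(
        (r) => toExpressPath(r.path) === path && r.method === method
      );

      if (
        sensitiveRoute &&
        ["confidential", "restricted"].includes(
          sensitiveRoute.classification?.level
        )
      ) {
        // Example: attach a flag to logs so your logger avoids PII fields
        req.context = req.context || {};
        req.context.noPiiLogging = true;
      }

      return next();
    }

    module.exports = { requireSecureLogging };

Attach this middleware to each classified route (for example, app.post("/api/v1/cards", requireSecureLogging, handler), where handler is your own route handler) rather than globally with app.use(), because Express only populates req.route after a route has matched. Then update your logger to drop PII fields whenever req.context.noPiiLogging is set.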

This ties directly into:

- PCI DSS logging requirements (avoiding PAN in logs).

- GDPR / HIPAA obligations to minimize unnecessary exposure of personal data.

Because the rule is derived from data-classification.yml, you now have a line from classification → code → runtime that auditors can follow.
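The logger side of that chain is worth sketching too. The middleware above is Node.js; for consistency with the CI scripts, here is the same idea in Python's standard logging module. It is a simplified illustration under stated assumptions: any run of 13 to 19 consecutive digits is treated as a candidate card number, card numbers written with spaces or dashes are not caught, and the names mask_pan and PanMaskingFilter are made up for this example.

```python
import logging
import re

# Assumption: 13-19 consecutive digits is treated as a candidate PAN.
PAN_PATTERN = re.compile(r"\b\d{13,19}\b")


def mask_pan(text: str) -> str:
    """Replace all but the last four digits of PAN-like digit runs."""
    return PAN_PATTERN.sub(
        lambda m: "*" * (len(m.group()) - 4) + m.group()[-4:], text
    )


class PanMaskingFilter(logging.Filter):
    """Logging filter that masks PAN-like numbers before a record is emitted."""

    def filter(self, record: logging.LogRecord) -> bool:
        # Format the message first so values passed as args are masked too.
        record.msg = mask_pan(record.getMessage())
        record.args = None  # the message is already fully formatted
        return True  # never drop the record, only rewrite it


logger = logging.getLogger("billing")
logger.addFilter(PanMaskingFilter())

# 4111111111111111 is a widely used test card number, not a real PAN.
print(mask_pan("charge failed for card 4111111111111111"))
```

A filter like this is a safety net, not a substitute for keeping card data out of log calls in the first place; a test such as test_pan_never_logged can assert both.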

5) Close the Loop with Risk Assessments, Remediation & Evidence

At some point, a compliance team—or a partner like Pentest Testing Corp—will need to:

- Perform a formal risk assessment across PCI DSS, SOC 2, ISO 27001, GDPR, and HIPAA.

- Run remediation projects to close gaps and capture proof for auditors.

Data Classification as Code makes that much easier because you can export a risk-ready view straight from your YAML.

Script: export risk register rows

    #!/usr/bin/env python3
    import csv
    import pathlib

    import yaml

    data = yaml.safe_load(pathlib.Path("data-classification.yml").read_text())

    rows = []

    for asset in data.get("assets", []):
        cls = asset.get("classification") or {}
        for reg in cls.get("regulations", []):
            rows.append(
                {
                    "service": data.get("service"),
                    "asset_id": asset["id"],
                    "asset_type": asset["type"],
                    "classification_level": cls.get("level"),
                    "regulation": reg,
                    "owner_team": data.get("owners", {}).get("team"),
                }
            )

    with open("risk-register-export.csv", "w", newline="") as f:
        writer = csv.DictWriter(
            f,
            fieldnames=[
                "service",
                "asset_id",
                "asset_type",
                "classification_level",
                "regulation",
                "owner_team",
            ],
        )
        writer.writeheader()
        writer.writerows(rows)

This CSV can go straight into:

- Your internal GRC / risk register.

- Pentest Testing Corp’s Risk Assessment Services intake form to accelerate scoping and gap analysis.

Pentest Testing Corp’s Remediation Services can also use your CI logs, scanner outputs, and YAML files as evidence when preparing you for certification or external audits.

On the Cyber Rely side, this approach complements existing guides on:

- Mapping CI/CD findings to SOC 2 and ISO 27001.

- Embedding compliance in CI/CD (feature flags, OPA rules, and PQC gates).

Data Classification as Code simply extends that pattern down to the data layer.

How This Helps with SOC 2, PCI DSS, GDPR, HIPAA & ISO 27001

Here’s how your Data Classification as Code setup maps to key frameworks:

- PCI DSS

  - classification.tags: [cardholder] and classification.level: restricted show where the CDE is.

  - CI gates + TLS/logging checks provide evidence for secure SDLC and logging requirements.

- GDPR

  - tags: [pii] and regulations: [gdpr] support records of processing, data minimization, and DPIA inputs.

  - External scans focused on PII endpoints help prove ongoing risk management.

- HIPAA

  - tags: [phi] combined with strong logging/TLS rules support administrative and technical safeguards around PHI.

- SOC 2 / ISO 27001

  - Version-controlled YAML + CI logs = evidence of logical access, change management, monitoring, and risk treatment controls in operation. (pentesttesting.com)

The key point: engineers never need to memorize every clause. They just maintain:

- A small YAML file per service.

- A handful of CI gates.

- A simple export for risk/compliance partners.

Cyber Rely and Pentest Testing Corp can then take those artifacts and package them into assessor-friendly evidence for your SOC 2, PCI DSS, ISO 27001, GDPR, and HIPAA journeys.

90-Day Rollout Plan for Data Classification as Code

If you want a concrete path, here’s a pragmatic 90-day approach:

Days 1–30 – Pilot on one critical service

- Pick a service handling cardholder data, PHI, or high-volume PII.

- Add data-classification.yml with assets, routes, and internet exposure.

- Add the classification CI job and fix initial failures.

- Run the free Website Vulnerability Scanner on its public URLs and attach sample reports to tickets.

Days 31–60 – Roll out to Tier-1 services

- Copy the pattern to remaining Tier-1 services (payments, accounts, auth, key data hubs).

- Generate CODEOWNERS entries from classification to tighten approvals.

- Start exporting risk-register-export.csv and feed it into your risk/compliance workflow or Pentest Testing’s Risk Assessment Services.

Days 61–90 – Integrate with formal compliance

- Map classification tags to specific risks and controls (with your compliance team or Pentest Testing’s Remediation Services).

- Align your CI evidence with Cyber Rely’s broader CI/CD compliance patterns (API gates, feature-flag evidence, PQC gates).

- Lock the process into your SDLC policy and make “update data-classification.yml” a standard acceptance criterion for new features.

By the end of 90 days, you should be able to answer an auditor’s “Where is cardholder data and how is it protected?” with Git-backed configs and CI logs, not guesswork.
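Once several services carry their own file, answering that auditor question can be a short query across the parsed configs. The sketch below is one way to do it, assuming each config has the shape used in this article and that encryption is declared at the asset level; the function name find_assets and the second service (accounts-api) are hypothetical.

```python
def find_assets(configs: list[dict], tag: str) -> list[dict]:
    """List every asset carrying the given classification tag."""
    rows = []
    for config in configs:
        for asset in config.get("assets") or []:
            cls = asset.get("classification") or {}
            if tag in (cls.get("tags") or []):
                encryption = asset.get("encryption") or {}
                rows.append(
                    {
                        "service": config.get("service"),
                        "asset_id": asset.get("id"),
                        "location": asset.get("location"),
                        "level": cls.get("level"),
                        "encrypted_at_rest": encryption.get("at_rest", False),
                    }
                )
    return rows


# Parsed configs from two services; accounts-api is an invented example.
configs = [
    {
        "service": "billing-api",
        "assets": [
            {"id": "customer_db", "location": "rds://billing-prod/customers",
             "classification": {"level": "restricted",
                                "tags": ["pii", "cardholder"]},
             "encryption": {"at_rest": True, "in_transit": True}},
            {"id": "invoice_pdf_s3", "location": "s3://billing-prod-invoices",
             "classification": {"level": "confidential", "tags": ["pii"]}},
        ],
    },
    {
        "service": "accounts-api",
        "assets": [
            {"id": "profile_db",
             "classification": {"level": "confidential", "tags": ["pii"]}},
        ],
    },
]

for row in find_assets(configs, "cardholder"):
    print(row)
```

Swapping the tag for pii or phi gives the equivalent view for GDPR or HIPAA conversations.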

If you’d like to extend this pattern, your next steps could be:

- Adding OPA/Rego or similar policy engines around Data Classification as Code, like in Cyber Rely’s CI gates for API security guides.

- Pulling in Pentest Testing’s Website Vulnerability Scanner, Risk Assessment Services, and Remediation Services as default tools in your classification-driven pipelines.
