Ingress2Gateway 1.0: Migration Guardrails for Teams Leaving Ingress-NGINX Behind

Ingress2Gateway migration is no longer a “later” platform task. It is now a production-safety task.

On March 20, 2026, Kubernetes announced Ingress2Gateway 1.0 as a stable migration assistant for moving from Ingress to Gateway API. That release matters because Kubernetes had already made the bigger platform reality explicit: ingress-nginx retirement means no more releases, no more bug fixes, and no more security updates after March 2026. For teams still running customer-facing routing, auth, and TLS through ingress-nginx, the question is no longer whether to plan a move. It is how to migrate without quietly breaking production behavior.

That is exactly where many migrations go wrong. The YAML conversion looks successful. The manifests apply. Traffic flows. But login redirects start looping, a case-insensitive regex stops matching, a timeout changes behavior, a body-size default breaks uploads, or TLS redirect logic shifts in ways nobody tested. Kubernetes’ own migration guidance is clear that Gateway API is the successor to Ingress, but it also warns that Ingress relied heavily on annotations, had portability problems, and was not well-suited to multi-team shared infrastructure.

We already publish practical, engineering-first guidance on this area on the Cyber Rely blog, including our existing post, Ingress-NGINX Retirement: 7 Gateway API Guardrails, and the related CVE-2026-3288 Ingress-NGINX Upgrade Guide. This article goes one step deeper on one theme: migration safety.

Why migration is urgent now

Ingress-NGINX retirement is not just a maintenance milestone. It is a control-plane risk milestone.

Kubernetes states that ingress-nginx maintenance halts in March 2026 and that, after retirement, there will be no further releases or fixes for newly discovered vulnerabilities. At the same time, Kubernetes is explicitly steering users toward Gateway API and now offers Ingress2Gateway 1.0 as a stable, tested migration assistant. In that 1.0 release, the project added support for more than 30 common ingress-nginx annotations and backs supported behavior with controller-level integration tests that compare runtime outcomes, not just YAML output.

That is encouraging, but it should not be misunderstood.

Ingress2Gateway is a migration assistant, not a permission slip to skip validation. Kubernetes’ own guidance says to review generated output and logs carefully, verify behavior in a development cluster, double-check body-size and timeout defaults, then deploy Gateway API alongside existing Ingress and shift traffic gradually with weighted DNS, a cloud load balancer, or platform traffic-splitting.

So the practical question for platform teams is this:

How do we turn Ingress2Gateway migration into a controlled engineering change instead of a routing gamble?

Inventory ingress dependencies before you convert anything

The first guardrail is simple: inventory behavior before you translate it.

Many teams think they are migrating “Ingress objects.” In reality, they are migrating a stack of coupled behaviors:

- host and path routing

- regex and rewrite handling

- TLS termination and redirects

- auth subrequests and sign-in flows

- header injection and forwarding

- source IP trust

- body-size limits and timeout assumptions

- controller-wide defaults

- snippets, ConfigMaps, and implementation-specific annotations

Our existing ingress-nginx retirement article makes the same point clearly: the visible Ingress object is often only half the behavior, while the other half lives in annotations, controller defaults, and forgotten assumptions in application teams.

Start with a fast controller inventory:

    kubectl get pods --all-namespaces \
      --selector app.kubernetes.io/name=ingress-nginx

Kubernetes specifically calls out that selector as the quickest way to confirm whether ingress-nginx is present.

Then enumerate controllers and versions across every cluster context:

    for ctx in $(kubectl config get-contexts -o name); do
      echo "### Context: $ctx"
      kubectl --context "$ctx" get pods -A \
        -l app.kubernetes.io/name=ingress-nginx \
        -o custom-columns='NAMESPACE:.metadata.namespace,POD:.metadata.name,IMAGE:.spec.containers[*].image'
      echo
    done

Then inventory Ingress resources and the annotations most likely to create migration drift:

    kubectl get ingress -A -o yaml | grep -E \
      'nginx.ingress.kubernetes.io/(rewrite-target|use-regex|auth-url|auth-signin|configuration-snippet|server-snippet|proxy-body-size|proxy-read-timeout|proxy-send-timeout|enable-cors)'

And capture the effective pre-migration state, not just the source manifests:

    helm list -A | grep ingress-nginx
    helm get values -n ingress-nginx <release-name> > ingress-values-before.yaml
    helm get manifest -n ingress-nginx <release-name> > ingress-manifest-before.yaml

That baseline matters because Kubernetes warns that some behaviors do not translate cleanly. Ingress2Gateway can emit warnings for unsupported annotations, best-effort timeout translations, body-size differences, URL normalization gaps, and implementation-specific behavior you must check manually.
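To make the annotation inventory easier to triage, the grep above can be turned into a per-annotation tally. This is a minimal sketch, not an official tool: the function name `tally_nginx_annotations` is hypothetical, and it assumes the Ingress YAML arrives on stdin.

```shell
# Tally ingress-nginx annotations found in Ingress YAML read from stdin.
# Prints "<count> <annotation>" lines, most frequent first.
# Note: `kubectl get -o yaml` may repeat annotations inside the
# last-applied-configuration blob, so treat counts as relative, not exact.
tally_nginx_annotations() {
  grep -oE 'nginx\.ingress\.kubernetes\.io/[a-z0-9-]+' \
    | sort \
    | uniq -c \
    | sort -k1,1nr -k2,2 \
    | awk '{ print $1, $2 }'
}

# Usage (assumes kubectl access to the cluster being inventoried):
#   kubectl get ingress -A -o yaml | tally_nginx_annotations
```

Annotations that appear on many Ingress objects are good candidates for the first round of explicit migration tests.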

Treat auth migration as a first-class workstream

Auth is where many “successful” Ingress2Gateway migrations fail.

In our recent post on the ingress-nginx migration, we already warned that one of the most dangerous assumptions is treating auth as if it lives “inside ingress.” In real deployments, auth often depends on external auth URLs, sign-in redirects, forwarded headers, oauth2-proxy, or custom header behavior expressed through ingress-nginx annotations. Gateway API can cleanly represent route structure, but auth frequently moves into implementation-specific policy objects, adjacent proxies, or service-level controls.

Before you convert anything, document:

- which routes are public

- which routes require session auth

- which routes require token auth

- which endpoints intentionally redirect to sign-in

- which headers upstream services expect after successful auth

- which error codes must be preserved for denied or expired sessions

A minimal continuity test matrix should include:

    # anonymous request should redirect to sign-in
    curl -isk https://app.example.com/admin

    # authenticated browser/session flow should return 200
    curl -isk -b session.txt https://app.example.com/admin

    # invalid token should remain a 401 or 403, not a silent redirect
    curl -isk -H "Authorization: Bearer invalid" https://api.example.com/v1/me

    # callback/login endpoints should still preserve original target
    curl -isk "https://app.example.com/login?next=/billing"

Also test upstream headers explicitly:

    curl -isk https://app.example.com/admin \
      -H "X-Debug-Test: auth-check"

Then validate in the application or upstream proxy that expected identity headers still arrive:

- X-Forwarded-User

- X-Forwarded-Email

- X-Auth-Request-User

- X-Forwarded-Proto

- X-Forwarded-Host

Do not assume parity. Prove parity.
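One way to prove parity rather than eyeball it is to classify each response and compare the label before and after migration. The sketch below is illustrative: the function name `classify_auth_response` is hypothetical, and the default sign-in path `/oauth2/sign_in` is an assumption based on oauth2-proxy's default, so override `SIGNIN_PATH` for your own identity flow.

```shell
# Assumed sign-in path (oauth2-proxy default); override for your identity flow.
SIGNIN_PATH="${SIGNIN_PATH:-/oauth2/sign_in}"

# Classify the first HTTP response in `curl -is` output read from stdin.
# Prints: redirect-to-signin | denied | ok | other:<status>
classify_auth_response() {
  awk -v signin="$SIGNIN_PATH" '
    in_body { next }                                  # skip everything after the headers
    NR == 1 { status = $2; sub(/\r$/, "", status) }   # status line, e.g. "HTTP/2 302"
    tolower($1) == "location:" { location = $2; sub(/\r$/, "", location) }
    /^\r?$/ { in_body = 1 }                           # blank line ends the header block
    END {
      if (status ~ /^30[12378]$/ && index(location, signin) > 0) print "redirect-to-signin"
      else if (status ~ /^40[13]$/) print "denied"
      else if (status ~ /^2/)       print "ok"
      else                          print "other:" status
    }'
}

# Usage:
#   curl -isk https://app.example.com/admin | classify_auth_response
```

Recording the label per route under ingress-nginx and again under the Gateway makes a silent change, such as a 401 becoming a sign-in redirect, show up as a one-word difference.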

Rebuild routing behavior intentionally

Routing migration is where “looks right” often becomes “works differently.”

Kubernetes’ February migration guidance highlights several surprising ingress-nginx behaviors that can break production after translation:

- regex matching can behave differently than you expect

- use-regex can affect all paths on a host

- rewrite-target behavior can imply regex-style handling

- trailing-slash redirect behavior may disappear unless you add it explicitly

- path normalization can differ across implementations

This is exactly why Ingress2Gateway’s output must be reviewed, not rubber-stamped.

A safe flow looks like this:

    # From manifest files
    ingress2gateway print \
      --input-file ingress.yaml,ingress-extra.yaml \
      --providers=ingress-nginx > gwapi.yaml

    # Or from a namespace
    ingress2gateway print \
      --namespace production-apps \
      --providers=ingress-nginx > gwapi.yaml

    # Or cluster-wide
    ingress2gateway print \
      --providers=ingress-nginx \
      --all-namespaces > gwapi.yaml

Kubernetes documents all three patterns and also notes that you can use emitters for implementation-specific extensions where needed.

Then inspect the warnings. If the generated output tells you:

- an annotation is unsupported

- a timeout was translated on a best-effort basis

- a body-size setting was not mapped

- regex matching was made case-insensitive

- URL normalization may differ

that is not “tool noise.” That is your migration backlog.

A practical routing validation pack should include:

    # exact path
    curl -isk https://app.example.com/health

    # prefix path
    curl -isk https://app.example.com/api/v1/orders

    # case-sensitive path trap
    curl -isk https://app.example.com/Headers
    curl -isk https://app.example.com/headers

    # rewritten route
    curl -isk https://app.example.com/ip

    # regex route
    curl -isk https://app.example.com/users/123

    # trailing slash behavior
    curl -isk https://app.example.com/my-path
    curl -isk https://app.example.com/my-path/

If your current platform relies on case-insensitive regex or rewrite quirks, write explicit tests for those before the cutover. Kubernetes shows that a route that worked under ingress-nginx can return 404 under Gateway API if you do not account for those differences.
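The validation pack is most useful when its results are captured under ingress-nginx and again under the Gateway, then compared mechanically. The following is a rough sketch under stated assumptions: `route_drift` is a hypothetical helper, and both input files are assumed to hold one `<path> <status>` pair per line, with a non-empty "before" file.

```shell
# Compare two captures of "<path> <status>" lines and print every path whose
# status changed or that exists in only one capture.
# Returns 1 when any drift is found, so it can gate a pipeline step.
# usage: route_drift <before-file> <after-file>
route_drift() {
  awk '
    NR == FNR { before[$1] = $2; next }              # first file: remember old status
    {
      seen[$1] = 1
      if (!($1 in before))       { printf "%s missing-before -> %s\n", $1, $2; drift = 1 }
      else if (before[$1] != $2) { printf "%s %s -> %s\n", $1, before[$1], $2; drift = 1 }
    }
    END {
      for (p in before)
        if (!(p in seen)) { printf "%s %s -> missing-after\n", p, before[p]; drift = 1 }
      exit (drift ? 1 : 0)
    }' "$1" "$2"
}

# Capturing one side (repeat against the Gateway endpoint for after.txt):
#   for p in /health /Headers /headers /my-path /my-path/; do
#     printf '%s %s\n' "$p" \
#       "$(curl -sk -o /dev/null -w '%{http_code}' "https://app.example.com$p")"
#   done > before.txt
```

A line such as `/Headers 200 -> 404` is the kind of case-sensitivity regression described above, surfaced before real traffic finds it.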

Treat TLS migration as certificate behavior, not just secret attachment

TLS migration is not finished because a Secret reference exists in a Gateway listener.

Kubernetes’ migration guidance notes that the Ingress API had only limited features and leaned on annotations for many advanced behaviors, while Gateway API makes listeners, entry points, and TLS ownership more explicit. That is a benefit, but it also means responsibilities often shift between developer, application admin, and cluster operator.

Your TLS migration checklist should include:

- certificate reference and namespace validation

- hostname coverage and SAN review

- HTTP-to-HTTPS redirect behavior

- HSTS preservation

- SNI behavior across multi-host listeners

- issuer and renewal workflow continuity

- TLS policy differences between old and new controllers

Minimal TLS checks:

    # certificate + redirect chain
    curl -IL http://app.example.com

    # verify TLS handshake + host
    openssl s_client -connect app.example.com:443 -servername app.example.com </dev/null

    # inspect response headers
    curl -isk https://app.example.com | grep -Ei 'strict-transport-security|location|server'

Kubernetes also notes that Ingress2Gateway may create an HTTP listener and redirect route to preserve ingress-nginx’s default HTTP-to-HTTPS behavior. That may be correct for your environment, or it may not be. Review it intentionally.
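To review the redirect behavior intentionally, it helps to reduce the header dump to one line per hop. This is a small sketch with hypothetical helper names (`redirect_hops`, `has_hsts`); it assumes the input is the header output of `curl -sIL` on stdin.

```shell
# Summarize each response in `curl -sIL <url>` output read from stdin.
# Prints "<status> <location-or-dash>" per hop, in order.
redirect_hops() {
  awk '
    /^HTTP\// {                                   # a new response begins
      if (have) print status, (loc == "" ? "-" : loc)
      status = $2; loc = ""; have = 1; next
    }
    tolower($1) == "location:" { loc = $2 }
    END { if (have) print status, (loc == "" ? "-" : loc) }
  ' | tr -d '\r'
}

# Succeeds if any response in the input carries an HSTS header.
has_hsts() {
  grep -qi '^strict-transport-security:'
}

# Usage:
#   curl -sIL http://app.example.com | redirect_hops
#   curl -sI https://app.example.com | has_hsts || echo "HSTS header missing"
```

Comparing the hop list from the old controller with the one from the Gateway shows whether the redirect status code or target changed, for example a 308 becoming a 301.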

Re-test timeouts, body size, headers, and client IP trust

This is where many production incidents hide.

Ingress2Gateway 1.0 can translate some ingress-nginx annotations, but Kubernetes explicitly shows that others do not map perfectly. In its own example, proxy-read-timeout and proxy-send-timeout are only best-effort translated, proxy-body-size has no standard Gateway API equivalent, and URL normalization remains implementation-specific. Kubernetes then tells teams to verify body-size defaults and confirm that timeouts are appropriate in a development cluster before rolling traffic.

That means you should test:

- large uploads

- slow upstream responses

- websocket or streaming behavior if relevant

- forwarded header handling

- source IP trust and WAF/rate-limit interactions

- upstream logic tied to host or proto headers

Example checks:

    # body-size validation
    curl -isk -F "file=@./sample-50mb.bin" https://app.example.com/upload

    # timeout validation
    time curl -isk https://api.example.com/slow-endpoint

    # header continuity
    curl -isk https://app.example.com/debug/headers

    # client IP path
    curl -isk https://app.example.com/debug/ip

If you have rate limiting or IP-based auth decisions, capture before/after behavior during controlled test traffic. This is also a good point to review our related post, 9 Powerful Infrastructure as Code Security Guardrails, so migration checks become part of the delivery pipeline instead of a one-time spreadsheet exercise.
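The body-size check needs payloads of known size, ideally one just under and one just over the limit you relied on before migration. The helpers below are a sketch with hypothetical names (`make_payload`, `make_boundary_payloads`); the limit you pass in is whatever your old `proxy-body-size` or controller default was.

```shell
# Create a zero-filled file of N MiB for upload-limit tests.
# usage: make_payload <size-in-MiB> <output-file>
make_payload() {
  dd if=/dev/zero of="$2" bs=1048576 count="$1" 2>/dev/null
}

# Create two payloads that straddle a limit: <prefix>-under.bin and <prefix>-over.bin.
# usage: make_boundary_payloads <limit-in-MiB> <prefix>
make_boundary_payloads() {
  make_payload "$(( $1 - 1 ))" "$2-under.bin" &&
  make_payload "$(( $1 + 1 ))" "$2-over.bin"
}

# Usage:
#   make_payload 50 sample-50mb.bin
#   curl -sk -o /dev/null -w '%{http_code}\n' \
#     -F "file=@./sample-50mb.bin" https://app.example.com/upload
```

If the "under" payload starts returning 413 after migration, or the "over" payload is suddenly accepted, the effective body-size limit has changed even though no manifest says so.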

Roll out in parallel, then shift traffic gradually

Do not turn translation success into immediate replacement.

Kubernetes’ recommended rollout sequence is straightforward:

- generate Gateway API manifests

- test them thoroughly in a development cluster

- deploy Gateway API alongside the existing Ingress

- gradually shift traffic using weighted DNS, a cloud load balancer, or platform traffic splitting

- remove the old Ingress only after behavior is proven

That is the right model because it gives you a real rollback path.

A safe cutover checklist:

- old ingress-nginx controller still running

- new Gateway controller live in parallel

- mirrored or staged synthetic tests green

- auth continuity tests green

- upload and timeout tests green

- key routes compared under canary traffic

- observability dashboards ready

- rollback owner named

- rollback commands tested, not imagined

A simple rollout gate can look like this:

    #!/usr/bin/env bash
    set -euo pipefail

    checks=(
      "curl -fsS https://canary.example.com/health >/dev/null"
      "curl -fsS https://canary.example.com/login >/dev/null"
      "curl -fsS https://canary.example.com/api/v1/me -H 'Authorization: Bearer testtoken' >/dev/null"
    )

    for check in "${checks[@]}"; do
      echo "[RUN] $check"
      eval "$check"
    done

    echo "[OK] canary verification passed"

Preserve rollback evidence before you need it

Rollback is not a sentence in a change ticket. It is a tested recovery path with preserved evidence.

Kubernetes’ upgrade and migration guidance stresses keeping the last known-good manifests and values, preserving logs and configuration state if compromise is suspected, and reviewing generated output rather than trusting defaults. Our ingress-nginx retirement article also emphasizes preserving effective ingress-nginx config, rollout data, and evidence before touching production.

Keep these artifacts before cutover:

- ingress manifests

- Gateway manifests

- controller deployment specs

- Helm history

- current TLS Secret names and issuers

- generated nginx config if accessible

- routing test outputs

- access logs and auth logs

- canary window timestamps

- rollback commands and prior image tags

Example rollback helpers:

    helm history -n ingress-nginx <release-name>
    helm rollback -n ingress-nginx <release-name> <previous-revision>
    kubectl -n ingress-nginx rollout status deploy/<controller-deployment-name>

If there is even a small chance that a routing or auth issue overlaps with active abuse, preserve logs and config state before you “clean up.”
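Preserved evidence is more convincing when it is bundled once, timestamped, and checksummed. The following is a minimal sketch, assuming the artifacts listed above have already been written into a single directory; `preserve_evidence` and the `ingress-evidence-` file prefix are hypothetical names, and the output directory should sit outside the artifact directory.

```shell
# Bundle a directory of pre-cutover artifacts into a timestamped tarball,
# with a SHA-256 manifest (SHA256SUMS) stored inside the bundle.
# Prints the tarball path on success.
# usage: preserve_evidence <artifact-dir> <output-dir>
preserve_evidence() {
  src=$1; out=$2
  stamp=$(date -u +%Y%m%dT%H%M%SZ)
  bundle="$out/ingress-evidence-$stamp.tar.gz"
  mkdir -p "$out" || return 1
  # Hash every file first so the manifest travels with the evidence.
  ( cd "$src" && find . -type f ! -name SHA256SUMS -exec sh -c '
      if command -v sha256sum >/dev/null 2>&1; then sha256sum "$@"
      else shasum -a 256 "$@"; fi
    ' sh {} + > SHA256SUMS ) || return 1
  tar -czf "$bundle" -C "$src" . || return 1
  printf '%s\n' "$bundle"
}

# Usage (artifact collection commands are the ones shown earlier):
#   mkdir -p artifacts
#   kubectl get ingress -A -o yaml > artifacts/ingress.yaml
#   helm get values -n ingress-nginx <release-name> > artifacts/ingress-values-before.yaml
#   preserve_evidence artifacts /var/tmp/evidence
```

Storing the bundle somewhere the migration tooling cannot overwrite keeps the "before" state intact if the cutover has to be investigated later.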

When external validation is worth it

Some migrations should not rely only on internal smoke tests.

External validation is usually worth it when:

- auth depends on oauth2-proxy, external auth, or custom headers

- regulated traffic depends on strict TLS and traceability

- client IP trust affects rate limiting, fraud, or access controls

- the ingress layer is shared across many services or teams

- there are custom snippets or controller-wide overrides

- the business impact of a subtle routing break is high

This is an inference from the risk patterns highlighted by Kubernetes and from our own migration guidance: the risk is not just “is the YAML valid?” but “did auth, routing, TLS, headers, timeouts, and rollback behavior stay correct under realistic traffic?”

For teams that want independent assurance before cutover, this is the point to bring in hands-on validation against production-like behavior, not just static review.

Where Cyber Rely fits naturally

If your migration backlog now includes route validation, auth continuity, TLS behavior checks, rollout controls, and evidence-ready testing, that is where Cyber Rely’s security services fit naturally.

For application-edge testing, Cyber Rely’s Web Application Penetration Testing Services and API Penetration Testing Services are the most relevant. Both map directly to the migration risks teams need to test: auth flows, exposed headers, API behavior, business-logic regressions, and security misconfigurations introduced during change.

And if you want more engineering-focused guidance before or during rollout, we already have related reading worth keeping open in adjacent tabs:

- Ingress-NGINX Retirement: 7 Gateway API Guardrails

- CVE-2026-3288 Ingress-NGINX Upgrade Guide

- 9 Powerful Infrastructure as Code Security Guardrails

Free Website Vulnerability Scanner tool by Pentest Testing Corp

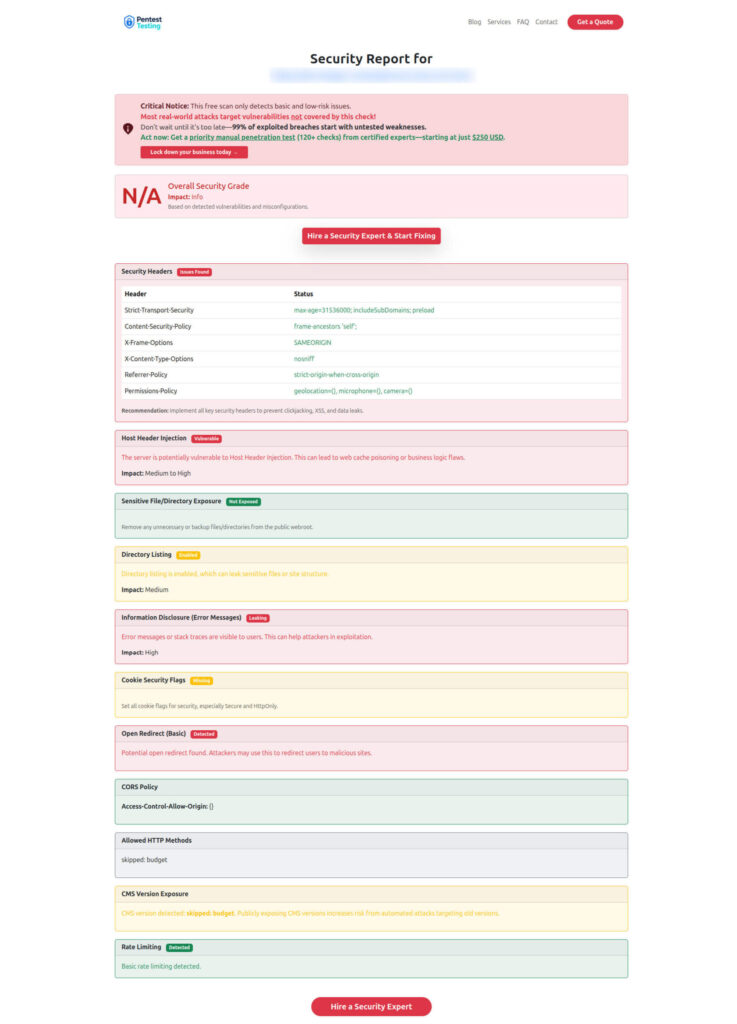

Sample report by the tool to check Website Vulnerability

Final thought

Ingress2Gateway migration should be treated as a behavior-preservation project, not a manifest-conversion project.

Ingress2Gateway 1.0 gives teams a far stronger starting point than earlier migration attempts, and Kubernetes has made the direction of travel unmistakable. But the safest teams will still do the harder work: inventory hidden ingress dependencies, separate auth from routing, rebuild regex and rewrite logic deliberately, test TLS and header continuity, roll out in parallel, and preserve rollback evidence before production traffic moves.

Validate your migration path before you ship routing or auth regressions.

Use the patterns above, review related guidance on the Cyber Rely blog, and route high-risk migrations through a formal validation plan before you cut traffic.
