Ingress-NGINX Rewrite Injection (CVE-2026-3288): Safe Upgrade and Validation Playbook for Engineering Teams

Kubernetes disclosed CVE-2026-3288 on March 9, 2026. The issue affects ingress-nginx when the nginx.ingress.kubernetes.io/rewrite-target annotation can be used to inject configuration into nginx, creating risk of code execution in the controller context and disclosure of Secrets the controller can access. The advisory lists affected versions as ingress-nginx < 1.13.8, < 1.14.4, and < 1.15.0, with fixes in 1.13.8, 1.14.4, and 1.15.0. In practice, a 1.13.x controller needs at least 1.13.8, a 1.14.x controller needs at least 1.14.4, and anything older than 1.13.8 is affected.

For engineering teams, this is not just a patching task. It is a rollout-control task. You need to answer five questions quickly: Are we exposed, where are rewrites used, what is the safest upgrade path, what behavior must be regression-tested after patching, and what evidence must be preserved if exploitation is suspected?

This playbook is built for developers, DevOps teams, platform engineers, CTOs, and product security teams who need to move fast without breaking auth, routing, TLS, or incident evidence.

What the advisory says and which ingress-nginx versions are affected

The Kubernetes advisory is clear on four points:

- If you do not run ingress-nginx, you are not affected.

- The vulnerable behavior is tied to the rewrite-target annotation.

- Admission control can be used as a temporary mitigation before upgrade by blocking use of that annotation.

- Suspicious data in the rules.http.paths.path field of an Ingress object may indicate attempted exploitation.

That means the right first move is not “upgrade everything blindly.” The right first move is to identify exactly where ingress-nginx exists, where rewrite behavior is used, and which environments can be upgraded first without creating customer-facing routing regressions.

How to inventory exposure across clusters and environments quickly

Start by identifying every cluster and namespace that runs ingress-nginx.

```shell
kubectl get pods --all-namespaces \
  --selector app.kubernetes.io/name=ingress-nginx
```

That exact selector is called out by the Kubernetes advisory as the quickest check for whether ingress-nginx is present.

Then inventory controller images across all contexts:

```shell
# List ingress-nginx controller pods and their images in every kubeconfig context
for ctx in $(kubectl config get-contexts -o name); do
  echo "### Context: $ctx"
  kubectl --context "$ctx" get pods -A \
    -l app.kubernetes.io/name=ingress-nginx \
    -o custom-columns='NAMESPACE:.metadata.namespace,POD:.metadata.name,IMAGE:.spec.containers[*].image'
  echo
done
```

Next, find every Ingress that uses the vulnerable annotation:

```shell
kubectl get ingress -A -o json | jq -r '
  .items[]
  | select(.metadata.annotations["nginx.ingress.kubernetes.io/rewrite-target"] != null)
  | {
      namespace: .metadata.namespace,
      ingress: .metadata.name,
      rewrite_target: .metadata.annotations["nginx.ingress.kubernetes.io/rewrite-target"],
      paths: [ .spec.rules[]?.http.paths[]?.path ]
    }'
```

Also snapshot the paths of every Ingress object so you can review them for unusual values:

```shell
kubectl get ingress -A -o json | jq -r '
  .items[]
  | {
      namespace: .metadata.namespace,
      ingress: .metadata.name,
      paths: [ .spec.rules[]?.http.paths[]?.path ]
    }'
```

For a fast engineering triage, classify what you find into four buckets:

- Internet-facing production ingress

- Internal-only ingress

- Staging or pre-production ingress

- Dormant or legacy ingress definitions

That separation matters. Internet-facing production controllers usually get the fastest patch path, but staging is where you prove rewrite, auth, and header behavior before touching prod.

Capture the baseline before you change anything

Before upgrades, export the current ingress state:

```shell
kubectl get ingress,ingressclass,configmap,service,deployment,replicaset,pod -A -o yaml > ingress-baseline.yaml
kubectl get validatingwebhookconfiguration,mutatingwebhookconfiguration -o yaml > webhook-baseline.yaml
kubectl get events -A --sort-by=.lastTimestamp > cluster-events-baseline.txt
```

If you use Helm, capture the release state too:

```shell
helm list -A | grep ingress-nginx
# -o yaml writes plain YAML (no "USER-SUPPLIED VALUES:" header), so the file can be reused with helm upgrade -f
helm get values -n ingress-nginx <release-name> -o yaml > ingress-values-before.yaml
helm get manifest -n ingress-nginx <release-name> > ingress-manifest-before.yaml
```

And if you can safely access a controller pod, keep a copy of the generated nginx config for later diffing:

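```shell
# The component label skips admission-webhook job pods that can share the name label
POD=$(kubectl -n ingress-nginx get pod -l app.kubernetes.io/name=ingress-nginx,app.kubernetes.io/component=controller -o jsonpath='{.items[0].metadata.name}')
kubectl -n ingress-nginx exec "$POD" -- nginx -T > nginx-effective-before.txt
```

Safe upgrade sequencing, rollback planning, and config-diff validation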

The safest upgrade pattern is:

- Freeze risky ingress changes

- Block new rewrite-target usage at admission if you cannot upgrade immediately

- Upgrade a lower environment first

- Diff effective config before and after

- Run validation tests against rewrites, auth, headers, TLS, and rate limits

- Promote to production with a tested rollback path

Temporary mitigation before upgrade

The Kubernetes advisory explicitly recommends admission control to block rewrite-target before you upgrade.

A practical Kyverno example:

```yaml
apiVersion: kyverno.io/v1
kind: ClusterPolicy
metadata:
  name: block-ingress-nginx-rewrite-target
spec:
  validationFailureAction: Enforce
  background: true
  rules:
    - name: deny-rewrite-target
      match:
        any:
          - resources:
              kinds:
                - Ingress
      validate:
        message: "rewrite-target annotation is temporarily blocked pending remediation."
        deny:
          conditions:
            any:
              - key: "{{ request.object.metadata.annotations.\"nginx.ingress.kubernetes.io/rewrite-target\" || '' }}"
                operator: NotEquals
                value: ""
```

Choose the least disruptive fixed version

Move to the nearest supported fixed version for your deployment track:

- 1.13.8

- 1.14.4

- 1.15.0 or newer
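To turn the image strings from the inventory loop into a verdict, a small shell function can compare each tag against these fixed versions. This is a sketch under stated assumptions: classify_ingress_nginx_image is a hypothetical helper, it expects tags of the form vMAJOR.MINOR.PATCH (optionally followed by an @sha256 digest), it treats any release from 1.15.0 onward as fixed, and it does not special-case pre-release tags. An image referenced by digest only comes back as unknown and needs manual review.

```shell
# Hypothetical helper: prints "fixed", "vulnerable", or "unknown" for an
# ingress-nginx controller image reference, based on the advisory's fixed
# versions (1.13.8, 1.14.4, 1.15.0). Pre-release tags are not special-cased.
classify_ingress_nginx_image() {
  ref=${1%%@*}      # drop an optional @sha256:... digest
  tag=${ref##*:}    # keep the text after the last colon (the tag)
  tag=${tag#v}      # strip a leading "v"

  # Anything that does not look like MAJOR.MINOR.PATCH needs manual review
  if ! printf '%s\n' "$tag" | grep -Eq '^[0-9]+\.[0-9]+\.[0-9]+'; then
    echo unknown
    return 0
  fi

  major=$(printf '%s\n' "$tag" | cut -d. -f1)
  minor=$(printf '%s\n' "$tag" | cut -d. -f2)
  patch=$(printf '%s\n' "$tag" | cut -d. -f3 | sed 's/[^0-9].*//')

  if [ "$major" -gt 1 ]; then
    echo fixed          # assumption: later major versions include the fix
  elif [ "$major" -lt 1 ]; then
    echo vulnerable     # 0.x is older than 1.13.8
  elif [ "$minor" -ge 15 ]; then
    echo fixed          # 1.15.0 or newer
  elif [ "$minor" -eq 14 ] && [ "$patch" -ge 4 ]; then
    echo fixed          # 1.14.4 or a later 1.14.x
  elif [ "$minor" -eq 13 ] && [ "$patch" -ge 8 ]; then
    echo fixed          # 1.13.8 or a later 1.13.x
  else
    echo vulnerable
  fi
}

# Example usage against the current context (one image per controller pod):
# kubectl get pods -A -l app.kubernetes.io/name=ingress-nginx \
#   -o jsonpath='{range .items[*]}{.spec.containers[*].image}{"\n"}{end}' |
#   while read -r img; do echo "$img $(classify_ingress_nginx_image "$img")"; done
```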

Do not assume that a fixed controller image means everything else is fine. Your real risk after the rollout is behavioral drift.

Example staged upgrade flow

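```shell
# 1) pause non-essential ingress changes
#    (the label is only a marker: it blocks nothing unless a pipeline or admission policy checks it)
kubectl label ns ingress-nginx change-freeze=true --overwrite

# 2) back up current revision info
helm history -n ingress-nginx <release-name>

# 3) upgrade in staging first (confirm kubectl and helm point at the staging cluster)
#    v1.15.0 is shown; use the fixed release for your track.
#    The official chart pins the controller image by digest by default, so clear
#    controller.image.digest or the tag override may not change the pulled image.
#    Bumping the chart to a release that ships the fixed controller is the cleaner alternative.
helm upgrade <release-name> ingress-nginx/ingress-nginx \
  -n ingress-nginx \
  -f ingress-values-before.yaml \
  --set controller.image.tag=v1.15.0 \
  --set controller.image.digest=

# 4) wait for rollout
kubectl -n ingress-nginx rollout status deploy/<controller-deployment-name>

# 5) capture post-upgrade generated config
POD=$(kubectl -n ingress-nginx get pod -l app.kubernetes.io/name=ingress-nginx,app.kubernetes.io/component=controller -o jsonpath='{.items[0].metadata.name}')
kubectl -n ingress-nginx exec "$POD" -- nginx -T > nginx-effective-after.txt

# 6) diff effective config
diff -u nginx-effective-before.txt nginx-effective-after.txt | less
```

What to diff on purpose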

Do not skim the diff. Review it specifically for:

- location blocks

- rewrite directives

- auth subrequests such as auth_request

- forwarded headers such as X-Forwarded-*

- real_ip handling

- redirect behavior

- timeout values

- body-size limits

- rate-limiting directives

If your team cannot explain those diffs clearly, do not promote yet.
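Raw nginx -T output is long, so it can help to pre-filter both captures down to the directive families in this checklist before reading the full diff. The sketch below is hypothetical: focus_nginx_config and focused_diff are illustrative names, and the directive pattern is an assumption you should extend for your own configuration. Because filtering drops surrounding context, treat it as a triage aid for nginx-effective-before.txt and nginx-effective-after.txt, not a replacement for reviewing the full diff.

```shell
# Hypothetical filter: keep only lines for the directive families the review
# checklist cares about (routing, rewrites, auth, forwarded headers, real IP,
# redirects, timeouts, body size, rate limits). The pattern is an assumption.
focus_nginx_config() {
  grep -E '^[[:space:]]*(server_name|location|rewrite|auth_request|proxy_set_header[[:space:]]+X-Forwarded|set_real_ip_from|real_ip_header|real_ip_recursive|return[[:space:]]+30[1278]|[a-z_]*_timeout|client_max_body_size|limit_req|limit_conn)' "$1" || true
}

# Unified diff of the filtered views of two nginx -T captures
focused_diff() {
  focus_nginx_config "$1" > "$1.focus"
  focus_nginx_config "$2" > "$2.focus"
  diff -u "$1.focus" "$2.focus"
}

# Example usage:
# focused_diff nginx-effective-before.txt nginx-effective-after.txt | less
```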

Rollback planning that actually works

A real rollback plan is not “helm rollback if needed.” It is a tested sequence:

- keep the last known-good values and manifest exports

- keep the prior controller image available

- know who approves rollback

- know which synthetic checks must fail before rollback is triggered

- preserve evidence before rollback if there is any chance of exploitation

Minimal rollback example:

```shell
helm history -n ingress-nginx <release-name>
helm rollback -n ingress-nginx <release-name> <previous-revision>
kubectl -n ingress-nginx rollout status deploy/<controller-deployment-name>
```

If compromise is even slightly suspected, collect logs and configuration state before reverting. A rollback that destroys the timeline may make incident scoping much harder later.
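The evidence-before-rollback rule is easy to skip under pressure, so it can be worth encoding it. The function below is a hypothetical sketch (safe_rollback and the pre-rollback-* file names are illustrative): it captures controller logs and the current release manifest first and refuses to run helm rollback if either capture fails. It assumes Helm 3, the standard ingress-nginx pod labels, and a single controller per namespace; it does not replace the full evidence set.

```shell
# Hypothetical wrapper: capture evidence first, then roll back.
# Arguments: namespace, Helm release, target revision, controller Deployment name.
safe_rollback() {
  ns=$1
  release=$2
  revision=$3
  deploy=$4
  stamp=$(date -u +%Y%m%dT%H%M%SZ)   # UTC timestamp for evidence file names

  # 1) Evidence before change; abort the rollback if any capture fails
  kubectl -n "$ns" logs -l app.kubernetes.io/name=ingress-nginx,app.kubernetes.io/component=controller \
    --since=24h --tail=-1 --prefix > "pre-rollback-logs-$stamp.log" || return 1
  helm get manifest -n "$ns" "$release" > "pre-rollback-manifest-$stamp.yaml" || return 1

  # 2) Only now revert, then wait for the rollout to settle
  helm rollback -n "$ns" "$release" "$revision" || return 1
  kubectl -n "$ns" rollout status "deploy/$deploy"
}

# Example usage:
# safe_rollback ingress-nginx <release-name> <previous-revision> <controller-deployment-name>
```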

What to test after patching: rewrites, auth, path handling, headers, TLS, rate limits

This is where most teams lose confidence. The controller is patched, but login breaks, path normalization changes, or header logic shifts.

Run validation in a disciplined matrix.

1) Rewrite behavior

Test all of the following:

- canonical routes

- trailing slash behavior

- double slashes

- encoded slashes

- regex-based paths

- legacy client paths that depend on rewrite assumptions

Example:

```shell
BASE="https://app.example.com"

curl -skI "$BASE/legacy"
curl -skI "$BASE/legacy/"
curl -skI "$BASE/legacy//health"
curl -skI "$BASE/legacy/%2Fhealth"
curl -skI "$BASE/legacy/api/v1/orders"
```

You are looking for unexpected 301/302/404/503 changes, wrong upstream routing, or auth bypass or failure caused by a path mismatch.
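To make that comparison mechanical rather than visual, one option is to capture the status code and redirect target for the same path list before and after the upgrade, then diff the two files. snapshot_routes is a hypothetical helper; it relies on curl's -w write-out variables http_code and redirect_url, sends plain GET requests (not HEAD) without following redirects, and does not inspect response bodies or which upstream answered.

```shell
# Hypothetical helper: for each path on stdin, print "path status redirect_target"
# using GET requests without following redirects. Diff the before/after files.
snapshot_routes() {
  base=$1
  while IFS= read -r path; do
    [ -n "$path" ] || continue
    result=$(curl -sk -o /dev/null -w '%{http_code} %{redirect_url}' "$base$path" < /dev/null)
    printf '%s %s\n' "$path" "${result% }"   # trim the trailing space when there is no redirect
  done
}

# Example usage:
# printf '%s\n' /legacy /legacy/ /legacy//health /legacy/%2Fhealth > paths.txt
# snapshot_routes "$BASE" < paths.txt > routes-before.txt   # before the upgrade
# snapshot_routes "$BASE" < paths.txt > routes-after.txt    # after the upgrade
# diff -u routes-before.txt routes-after.txt
```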

2) Authentication and authorization flow

Retest:

- unauthenticated access to protected endpoints

- authenticated access with normal roles

- expired token behavior

- SSO callback paths

- API gateway or sidecar auth integrations

- tenant or role-scoped routes

Example:

```shell
TOKEN="<paste-test-token>"

curl -skI "$BASE/protected"
curl -skI -H "Authorization: Bearer $TOKEN" "$BASE/protected"
curl -skI "$BASE/oauth/callback?code=test"
```

If your platform uses auth_request, external auth services, or identity-aware proxy logic, test those flows explicitly. Do not assume path rewrites preserved the same protection model.

3) Header handling and client IP trust

Recheck:

- X-Forwarded-For

- X-Forwarded-Proto

- host preservation

- upstream app logic that depends on original client IP

- security headers returned to the browser

Example:

curl -skI "$BASE/login"

curl -skI -H "X-Forwarded-Proto: https" "$BASE/login"

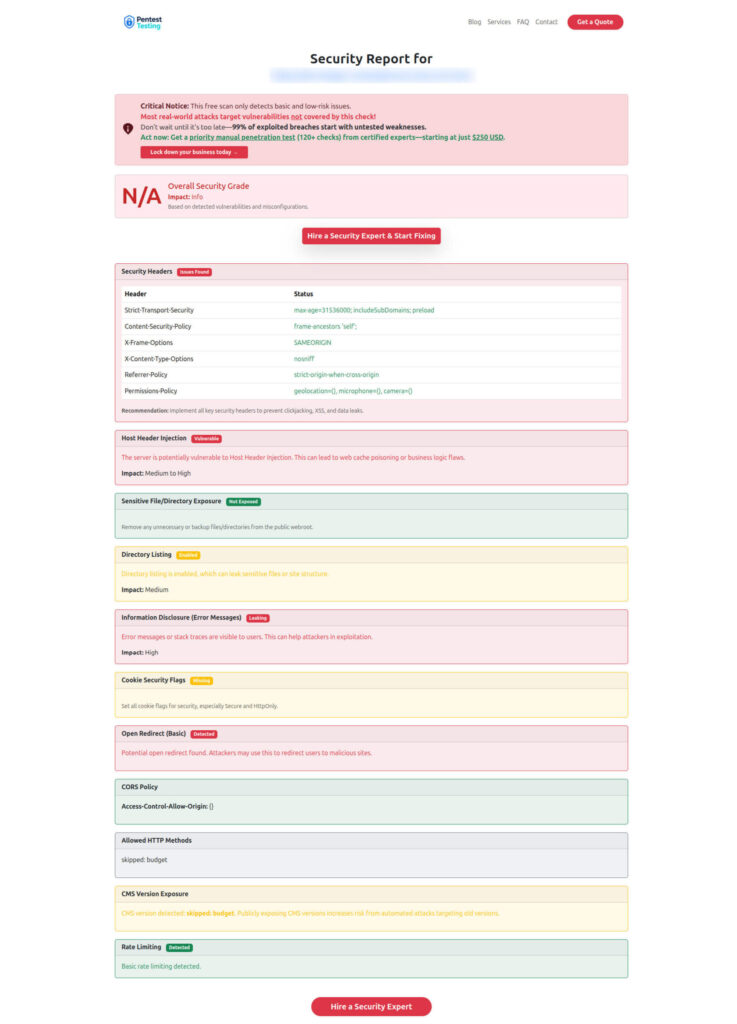

curl -skI -H "Host: app.example.com" "$BASE/"This is also a good point to run a quick external hygiene check through the Free Website Vulnerability Scanner. The tool page says it checks common vulnerabilities, HTTP header issues, exposed files, weak cookie settings, open redirects, directory listing, and other basic exposure indicators, and that the light scan requires no signup.
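For the browser-facing part of this list, a before/after comparison of security headers catches regressions that a status code will not. missing_security_headers is a hypothetical helper that reads curl -skI output on stdin; the three header names it checks are an assumption about what your apps send, so adjust the list. It only sees the first response, so point it at a URL that does not redirect.

```shell
# Hypothetical check: list expected security headers missing from `curl -skI` output on stdin.
# The header names are an assumption; edit the list to match what your apps should send.
missing_security_headers() {
  # Normalize: drop CRs from HTTP line endings and lowercase everything
  response=$(tr -d '\r' | tr '[:upper:]' '[:lower:]')
  for header in strict-transport-security x-content-type-options content-security-policy; do
    printf '%s\n' "$response" | grep -q "^$header:" || echo "missing: $header"
  done
}

# Example usage (run before and after the upgrade and compare):
# curl -skI "$BASE/login" | missing_security_headers
```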


4) TLS and redirect behavior

Validate:

- certificate chain

- SNI behavior

- expected redirect to HTTPS

- HSTS presence if you rely on it

- mTLS or upstream TLS behavior where applicable

Example:

```shell
openssl s_client -connect app.example.com:443 -servername app.example.com </dev/null
curl -sI http://app.example.com/     # expect a redirect to HTTPS
curl -sI https://app.example.com/    # no -k here: let curl verify the certificate chain
```

5) Rate limits, body size, and timeout behavior

Re-test any endpoints that depend on:

- rate limiting

- request buffering

- upload size

- long-lived requests

- websocket or SSE behavior

Example:

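```shell
seq 1 50 | xargs -I{} -P10 curl -sk -o /dev/null -w "%{http_code}\n" "$BASE/api/health" | sort | uniq -c
```

And for upload-sensitive routes:

```shell
# <form-field> and <test-file> are placeholders; try files just under and just over your body-size limit
curl -sk -F "<form-field>=@<test-file>" "$BASE/upload"
```

6) Application smoke tests with expected outputs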

Best practice is to keep a small validation file that defines:

- request

- expected upstream

- expected status

- expected redirect

- expected auth outcome

That turns post-patch validation from a meeting into a repeatable release artifact.
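A minimal sketch of such an artifact, assuming a hypothetical tab-separated file with one case per line (path, expected status, expected redirect target or "-" for none). It covers status and redirect only; expected upstream and auth outcome would need extra columns and checks, for example a header your upstream sets or a request carrying a test token. The runner uses curl's http_code and redirect_url write-out variables and exits non-zero if any case fails, so it can gate a release job.

```shell
# Hypothetical smoke-test runner. Matrix format (tab-separated, one case per line):
#   path <TAB> expected_status <TAB> expected_redirect ("-" when none)
# Lines that are empty or start with "#" are skipped.
run_smoke_matrix() {
  base=$1
  matrix=$2
  tab=$(printf '\t')
  failures=0
  while IFS=$tab read -r path want_status want_redirect; do
    case $path in ''|'#'*) continue ;; esac
    # GET without following redirects; print status and the redirect target
    result=$(curl -sk -o /dev/null -w '%{http_code} %{redirect_url}' "$base$path" < /dev/null)
    got_status=${result%% *}
    got_redirect=${result#* }
    [ -n "$got_redirect" ] || got_redirect=-
    if [ "$got_status" = "$want_status" ] && [ "$got_redirect" = "$want_redirect" ]; then
      echo "PASS $path"
    else
      echo "FAIL $path: want $want_status $want_redirect, got $got_status $got_redirect"
      failures=$((failures + 1))
    fi
  done < "$matrix"
  [ "$failures" -eq 0 ]   # non-zero exit status if any case failed
}

# Example matrix line: /legacy<TAB>301<TAB>https://app.example.com/legacy/
# Example usage: run_smoke_matrix "$BASE" smoke-matrix.tsv
```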

Logging and telemetry to preserve in case exploitation is suspected

The advisory notes that suspicious data inside rules.http.paths.path may indicate exploitation attempts.
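One way to triage that field quickly is to flag path values containing characters that rarely appear in ordinary URL paths but matter in nginx configuration syntax, such as ;, {, }, quotes, # and whitespace. This is a heuristic sketch, not a detection rule from the advisory: flag_suspicious_paths is a hypothetical helper, the character set is an assumption, legitimate regex paths (for example a {2} quantifier) will produce false positives, and a clean result does not prove a path is safe. It expects namespace/ingress<TAB>path records, which the jq command in its usage comment produces.

```shell
# Hypothetical heuristic: flag Ingress path values containing characters that are
# unusual in URL paths but meaningful in nginx config (; { } " ' ` # \ space tab).
# Input: one "namespace/ingress<TAB>path" record per line on stdin.
flag_suspicious_paths() {
  awk '
    {
      tab = index($0, "\t")
      if (tab == 0) {
        # A record without a tab usually means the previous path had an embedded newline
        print "SUSPICIOUS (no tab, possible embedded newline): " $0
        next
      }
      id = substr($0, 1, tab - 1)
      path = substr($0, tab + 1)
      if (path ~ /[;{}"#`\\ \t'\'']/) print "SUSPICIOUS: " id " -> " path
    }
  '
}

# Example usage:
# kubectl get ingress -A -o json | jq -r '
#   .items[]
#   | (.metadata.namespace + "/" + .metadata.name) as $id
#   | .spec.rules[]?.http.paths[]?
#   | "\($id)\t\(.path // "")"' | flag_suspicious_paths
```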

If you suspect abuse, preserve evidence before broad cleanup.

Minimum evidence set

Export and preserve:

- all affected Ingress manifests and annotations

- controller Deployment, ReplicaSet, Pod, Service, IngressClass, and ConfigMap state

- controller image tags and rollout history

- ingress-nginx controller logs

- Kubernetes audit logs for Ingress, ConfigMap, webhook, and RBAC changes

- admission-controller logs

- load balancer, WAF, CDN, and reverse-proxy logs

- identity-provider logs for admin or deployment access

- cert-manager events if TLS behavior changed

- CI/CD logs for who changed manifests and when

Example collection commands:

```shell
kubectl get ingress,ingressclass,configmap,service,deployment,replicaset,pod -A -o yaml > ingress-incident-snapshot.yaml
kubectl get validatingwebhookconfiguration,mutatingwebhookconfiguration -o yaml > webhook-incident-snapshot.yaml
# A label selector collects logs from every controller pod (deploy/<name> reads only one pod);
# --tail=-1 overrides the 10-line default that applies when a selector is used
kubectl logs -n ingress-nginx -l app.kubernetes.io/name=ingress-nginx,app.kubernetes.io/component=controller \
  --since=24h --tail=-1 --prefix --timestamps > ingress-controller-24h.log
kubectl get events -A --sort-by=.lastTimestamp > cluster-events-incident.txt
```

If you have cluster audit logging enabled, preserve those records immediately. If the controller had broad Secret access, that changes the impact question significantly because the advisory and NVD both note that default installations may expose Secrets accessible to the controller.

Preserve evidence before “cleaning up”

A good rule is simple:

- snapshot first

- hash exported evidence

- document timestamps in UTC

- only then contain, rotate, or revert
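The hash-and-timestamp steps can be scripted so they happen the same way every time. hash_evidence and verify_evidence are hypothetical helper names; the sketch assumes GNU coreutils sha256sum (on macOS, shasum -a 256 is the usual substitute) and records the collection time in UTC in a separate .utc file next to the manifest. Keep the manifest in write-protected storage, separate from the working copies of the evidence.

```shell
# Hypothetical helpers for the "snapshot, hash, timestamp" steps.
# Assumes GNU coreutils sha256sum.

# Usage: hash_evidence <manifest> <file>...
hash_evidence() {
  manifest=$1
  shift
  date -u +%Y-%m-%dT%H:%M:%SZ > "$manifest.utc"   # collection time in UTC
  sha256sum -- "$@" > "$manifest"                  # one "hash  filename" line per file
}

# Usage: verify_evidence <manifest>; non-zero exit if any file changed or is missing
verify_evidence() {
  sha256sum -c --quiet -- "$1"
}

# Example usage:
# hash_evidence evidence.sha256 ingress-incident-snapshot.yaml webhook-incident-snapshot.yaml \
#   ingress-controller-24h.log cluster-events-incident.txt
# verify_evidence evidence.sha256
```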

If your team needs a ready-made evidence-first investigation workflow, the Pentest Testing Corp article 7 Proven Digital Forensic Analysis Steps for Legal Evidence is a useful internal companion, and the service page for Digital Forensic Analysis Services emphasizes evidence preservation, timeline reconstruction, and containment support.

Longer-term hardening: policy, review gates, and migration guardrails

This advisory should trigger more than a one-time upgrade.

1) Treat risky ingress annotations as governed configuration

For production ingress changes, require:

- code review from platform + security owners

- policy checks on annotations

- explicit approval for rewrite logic

- regression tests for auth and routing before promotion
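The annotation policy check can also run in CI before a manifest reaches the cluster. check_rewrite_annotations is a hypothetical gate that does a plain-text search of a directory; it assumes your Ingress manifests (or rendered output, for example from helm template) live in the repository, and it cannot see annotations that are only added at deploy time, so keep the admission policy as the backstop.

```shell
# Hypothetical pre-merge gate: fail if any file under a directory sets rewrite-target.
# Usage: check_rewrite_annotations <dir>
check_rewrite_annotations() {
  dir=$1
  # -r: recurse, -l: list matching files, -F: fixed-string match (dots are literal)
  hits=$(grep -rlF 'nginx.ingress.kubernetes.io/rewrite-target' "$dir" 2>/dev/null | sort)
  if [ -n "$hits" ]; then
    echo "rewrite-target found; needs platform + security approval:"
    echo "$hits"
    return 1
  fi
  echo "no rewrite-target annotations found"
}
```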

2) Reduce who can change ingress objects

Not every namespace contributor should be able to modify internet-facing routing behavior. Restrict create/update access for Ingress objects, especially in shared clusters.
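To see who can currently change routing in a namespace, kubectl auth can-i can be run with impersonation for each subject you care about. who_can_edit_ingress is a hypothetical wrapper; the subjects you pass (users, or system:serviceaccount:<namespace>:<name> strings) are up to you, and running it requires permission to impersonate them.

```shell
# Hypothetical wrapper: report which of the given subjects may create, update,
# or patch Ingress objects in a namespace, using kubectl impersonation.
# Usage: who_can_edit_ingress <namespace> <subject>...
who_can_edit_ingress() {
  ns=$1
  shift
  for subject in "$@"; do
    for verb in create update patch; do
      # --quiet suppresses the yes/no text; the exit status carries the answer
      if kubectl auth can-i "$verb" ingresses.networking.k8s.io -n "$ns" --as "$subject" --quiet 2>/dev/null; then
        echo "$subject can $verb ingresses in $ns"
      fi
    done
  done
}
```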

3) Reduce controller blast radius

If practical:

- separate public and internal controllers

- narrow namespace scope

- review Secret access and RBAC

- isolate sensitive workloads from shared ingress planes
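Secret access is the part of blast radius that matters most here, since the advisory ties impact to Secrets the controller can read. A quick check is to ask whether the controller's identity can read Secrets across all namespaces. controller_secret_access is a hypothetical helper, and its default subject (system:serviceaccount:ingress-nginx:ingress-nginx) is an assumption; read the real name from the controller pod's spec.serviceAccountName. A "not allowed cluster-wide" answer still leaves namespace-scoped grants to review.

```shell
# Hypothetical check: can the controller's ServiceAccount read Secrets cluster-wide?
# The default subject below is an assumption; pass the real one as $1.
controller_secret_access() {
  sa=${1:-system:serviceaccount:ingress-nginx:ingress-nginx}
  for verb in get list watch; do
    if kubectl auth can-i "$verb" secrets --all-namespaces --as "$sa" --quiet 2>/dev/null; then
      echo "$verb secrets: allowed cluster-wide"
    else
      echo "$verb secrets: not allowed cluster-wide"
    fi
  done
}
```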

4) Build release gates around behavior, not just version numbers

A “patched” environment can still be broken if:

- auth callbacks route differently

- headers change

- timeout behavior changes

- rewrite semantics shift

- upstream apps depend on previous path normalization

So bake validation into CI/CD and release approval.

5) Use Gateway API migration guardrails deliberately

If this incident pushes your platform team toward broader ingress modernization, do not translate manifests mechanically. Keep migration guardrails around auth behavior, rewrite semantics, client IP trust, TLS handling, and rollback testing. Cyber Rely already has a related internal post, Ingress-NGINX Retirement: 7 Gateway API Guardrails, that is useful for the longer-term migration track.

Where Cyber Rely and Pentest Testing Corp fit

If your team needs an engineering-led second opinion after upgrade, use Cyber Rely and the Cyber Rely blog for advisory-driven platform guidance, and bring in Pentest Testing Corp where you need structured validation, remediation, or incident evidence support. The verified internal service pages include Risk Assessment Services, Remediation Services, Digital Forensic Analysis Services, and the Free Website Vulnerability Scanner.

This becomes especially useful when you need outside confidence that:

- auth and routing behavior stayed intact after the controller upgrade

- ingress changes did not create fresh edge exposure

- evidence was preserved correctly if suspicious activity appeared before or during rollout


Audit your clusters for affected ingress-nginx versions, validate your upgrade path, and use external testing if you need confidence that auth and routing behavior stayed intact.

🔐 Frequently Asked Questions (FAQs)

Find answers to commonly asked questions about CVE-2026-3288 Ingress-NGINX.