7 Powerful Secure Observability Pipeline Controls (Trusted Logs, Traces & Metrics)

Modern engineering teams built observability to answer: “Is the service up?”

Security teams need observability to answer: “What happened, who did it, and can we prove it?”

That gap is why secure observability matters. If your detection depends on telemetry, your telemetry becomes a security boundary—just like auth, secrets, and CI/CD. A secure observability pipeline is one you can trust during an incident: events are attributable, complete enough, time-consistent, and resistant to tampering.

In this guide, you’ll ship an engineering observability pipeline that produces trusted logs and traces for detection, reduces alert noise, and makes post-incident metrics and forensics fast.


What makes an observability pipeline trustworthy for security?

A security-grade telemetry pipeline should satisfy these properties:

- Authenticity — you can validate who/what emitted the data (workload identity, client cert, signed tokens).

- Integrity — events can’t be silently modified (tamper-evident hashing, immutability controls, strict access).

- Completeness (enough for forensics) — critical events aren’t dropped, sampling is policy-driven, and audit streams are preserved.

- Consistency — stable schemas, consistent timestamps, normalized clocks, and reliable correlation identifiers.

- Attribution — events are linked to actor, tenant, role, and request context (without leaking secrets/PII).

- Correlation — you can join logs ↔ traces ↔ metrics ↔ auth/audit events across services.

- Resilience under attack — rate limits, backpressure, queueing, and “fail-safe” behaviors prevent telemetry blackouts.

The “Trust Score” checklist (add these as SLOs)

Track these as first-class telemetry security KPIs:

- Span drop rate per service (collector/exporter errors, queue overflow)

- Clock skew distribution (p50/p95 drift vs trusted time)

- Schema compliance rate (required fields present)

- Unauthenticated ingestion attempts (blocked)

- Cardinality budget breaches (tag explosion can become a DoS)

- Audit event coverage for critical actions (login, privilege change, export, secret/config change)
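Here is a minimal Python sketch that turns two of these KPIs into SLO checks. It assumes your collector already exports per-window counters. The PipelineWindow shape and the threshold values are hypothetical examples, not recommended targets.

```python
from dataclasses import dataclass

@dataclass
class PipelineWindow:
    """Per-service counters for one evaluation window (hypothetical shape)."""
    spans_exported: int
    spans_dropped: int            # exporter errors + queue overflow
    events_checked: int
    events_schema_ok: int         # events with all required fields present
    unauthenticated_attempts: int

# Example targets only; derive real ones from your own baseline.
MAX_SPAN_DROP_RATE = 0.001        # 0.1% of spans
MIN_SCHEMA_COMPLIANCE = 0.99      # 99% of events

def trust_findings(w: PipelineWindow) -> list[str]:
    """Return human-readable findings; an empty list means the window looks healthy."""
    findings = []
    total = w.spans_exported + w.spans_dropped
    drop_rate = w.spans_dropped / total if total else 0.0
    if drop_rate > MAX_SPAN_DROP_RATE:
        findings.append(f"span_drop_rate={drop_rate:.4f}")
    compliance = w.events_schema_ok / w.events_checked if w.events_checked else 1.0
    if compliance < MIN_SCHEMA_COMPLIANCE:
        findings.append(f"schema_compliance={compliance:.4f}")
    if w.unauthenticated_attempts:
        # Blocked is good, but a nonzero count is still worth surfacing
        findings.append(f"unauthenticated_attempts={w.unauthenticated_attempts}")
    return findings

print(trust_findings(PipelineWindow(99_000, 1_000, 10_000, 9_950, 0)))
# → ['span_drop_rate=0.0100']
```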

Common pipeline gaps (and why they break detection)

1) Missing context

Security alerts without tenant_id, actor_id, auth decision, and request_id/trace_id turn into time-wasting investigations.

2) Dropped spans and partial traces

Sampling decisions or collector overload can cut out the exact service hop where auth failed or a privilege changed.
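Sampling needs to be policy-driven rather than random per service. The minimal sketch below shows one way to do that for a head-sampling decision, assuming each span carries its trace_id and an event category. It is not OpenTelemetry's TraceIdRatioBased sampler, only the same idea: derive the keep/drop decision from the trace_id so every hop agrees, and exempt security telemetry.

```python
import hashlib

def keep_span(trace_id: str, category: str, ratio: float) -> bool:
    """Head-sampling decision for one span.

    Consistent: every service hashes the same trace_id to the same number, so a
    kept trace keeps all of its hops. Policy-driven: security telemetry is exempt.
    """
    if category == "security":
        return True  # never sample out auth/audit telemetry
    digest = hashlib.sha256(trace_id.encode()).digest()
    bucket = int.from_bytes(digest[:8], "big")  # stable integer in [0, 2**64)
    # Python compares int and float exactly, so ratio=1.0 keeps every span
    return bucket < ratio * 2**64
```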

3) Misaligned clocks

If service clocks drift, your timeline reconstruction becomes guesswork—especially across distributed traces and async jobs.

Control #1 — Define a telemetry contract (schema + required fields)

Start by enforcing a shared schema for trusted logs and traces. Treat it like an API contract: versioned, validated, and tested.

Minimal security-grade event fields (recommended)

- timestamp (UTC, RFC 3339 or epoch ms)

- service.name, service.version, deployment.environment

- trace_id, span_id, request_id

- tenant_id (or org_id)

- actor.type (user/service), actor.id (stable ID), actor.role

- auth.decision (allow/deny), auth.policy (name/version), auth.reason (short code)

- event.type (e.g., authz.denied, data.export, admin.role_change)

- resource.type, resource.id (object acted upon)

- source.ip (if appropriate), user_agent (if appropriate)

- Never log raw tokens, cookies, or secrets
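Below is a minimal sketch of enforcing this contract in Python. It assumes events arrive as parsed JSON dicts with nested objects (so actor.id means event["actor"]["id"]). The REQUIRED_FIELDS subset and the FORBIDDEN_KEYS list are illustrative choices, not a standard.

```python
# Hypothetical contract: dotted paths every security event must carry.
REQUIRED_FIELDS = [
    "timestamp", "service.name", "trace_id", "request_id", "tenant_id",
    "actor.type", "actor.id", "event.type",
]
# Keys that must never appear anywhere in an event (example list; extend it).
FORBIDDEN_KEYS = {"token", "access_token", "authorization", "cookie", "password", "secret"}

def get_path(event: dict, path: str):
    """Follow a dotted path like 'actor.id' through nested dicts; None if absent."""
    node = event
    for part in path.split("."):
        if not isinstance(node, dict) or part not in node:
            return None
        node = node[part]
    return node

def find_forbidden(node, prefix=""):
    """Yield dotted paths of forbidden keys at any depth (case-insensitive)."""
    if isinstance(node, dict):
        for key, value in node.items():
            path = f"{prefix}.{key}" if prefix else key
            if key.lower() in FORBIDDEN_KEYS:
                yield path
            yield from find_forbidden(value, path)
    elif isinstance(node, list):
        for item in node:
            yield from find_forbidden(item, prefix)

def validate_event(event: dict) -> list[str]:
    """Return contract violations; an empty list means the event passes."""
    problems = [f"missing:{p}" for p in REQUIRED_FIELDS if get_path(event, p) in (None, "")]
    problems += [f"forbidden:{p}" for p in find_forbidden(event)]
    return problems
```

Running this in CI against sample events (and in the collector against live ones) also gives you the schema compliance rate from the Trust Score checklist.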

Example: JSON log event (security-focused)

{
  "timestamp": "2026-02-26T10:22:31.442Z",
  "severity": "INFO",
  "service": { "name": "billing-api", "version": "1.18.3", "env": "prod" },
  "trace_id": "4bf92f3577b34da6a3ce929d0e0e4736",
  "span_id": "00f067aa0ba902b7",
  "request_id": "req_7c9d2f8f",
  "tenant_id": "t_1029",
  "actor": { "type": "user", "id": "u_88421", "role": "admin" },
  "event": { "type": "authz.denied", "category": "security" },
  "auth": { "decision": "deny", "policy": "rbac-v3", "reason": "MISSING_SCOPE" },
  "resource": { "type": "invoice", "id": "inv_55109" },
  "http": { "method": "GET", "route": "/v1/invoices/{id}", "status": 403 }
}

Control #2 — Enrich events at the edge (where context is richest)

Your gateway/edge is the best place to ensure:

- request_id exists

- trace_id is created (or accepted from trusted upstream)

- tenant and actor context is attached (after auth)

- “security events” are emitted for key actions
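For the trace_id item, here is a minimal sketch of "accept from a trusted upstream, otherwise create" using the W3C Trace Context traceparent header (version 00 only). How you decide upstream_trusted (for example, an mTLS-authenticated internal caller) is an assumption left to your gateway. The headers argument is also assumed to have lowercase keys.

```python
import re
import secrets

# W3C Trace Context traceparent, version 00:
# "00-" + 32 lowercase hex (trace-id) + "-" + 16 lowercase hex (parent-id) + "-" + 2 hex (flags)
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")

def accept_or_create_trace(headers: dict, upstream_trusted: bool) -> tuple[str, bool]:
    """Return (trace_id, continued_upstream_trace)."""
    match = TRACEPARENT_RE.match(headers.get("traceparent", "").strip())
    if upstream_trusted and match:
        trace_id, parent_id, _flags = match.groups()
        # The spec treats all-zero trace-id and parent-id values as invalid
        if trace_id != "0" * 32 and parent_id != "0" * 16:
            return trace_id, True
    # Untrusted caller or malformed header: start a fresh trace instead of adopting theirs
    return secrets.token_hex(16), False
```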

Node.js (Express): request_id + trace correlation + safe tags

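import crypto from "crypto";

// Reuse an incoming request ID only if it looks like a plain token; otherwise mint one.
// (The 1-64 char [A-Za-z0-9_-] pattern is an example policy; it keeps client-supplied
// values from injecting arbitrary text into your logs.)
const REQUEST_ID_RE = /^[A-Za-z0-9_-]{1,64}$/;

export function edgeContext(req, res, next) {
  const incoming = req.header("X-Request-Id");
  const requestId = incoming && REQUEST_ID_RE.test(incoming) ? incoming : `req_${crypto.randomUUID()}`;
  res.setHeader("X-Request-Id", requestId);

  // Example: attach stable IDs from your auth middleware (not raw JWT)
  req.sec = {
    request_id: requestId,
    tenant_id: req.user?.tenantId,
    actor_id: req.user?.id,
    actor_role: req.user?.role
  };

  next();
}

Emit a security audit event (do this for high-signal actions)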

export function audit(event, ctx) {
  // Keep stable IDs, redact by default
  console.log(JSON.stringify({
    timestamp: new Date().toISOString(),
    severity: "INFO",
    event: { type: event.type, category: "security" },
    request_id: ctx.request_id,
    tenant_id: ctx.tenant_id,
    actor: { type: "user", id: ctx.actor_id, role: ctx.actor_role },
    resource: event.resource,
    auth: event.auth
  }));
}

// Example usage after an authZ decision (inside a route handler, where req is in scope):
audit(
  {
    type: "data.export",
    resource: { type: "customer_export", id: "exp_9012" },
    auth: { decision: "allow", policy: "rbac-v3", reason: "SCOPE_OK" }
  },
  req.sec
);

Control #3 — Identity propagation across services (so traces mean something)

Distributed tracing is only security-useful when you can correlate a trace to:

- the workload identity (service account / workload identity)

- the actor identity (user or machine)

- the auth decision and policy version

Python (FastAPI): add identity + trace fields to logs

from fastapi import FastAPI, Request
import datetime, json, time, uuid

app = FastAPI()

@app.middleware("http")
async def add_security_context(request: Request, call_next):
    request_id = request.headers.get("x-request-id") or f"req_{uuid.uuid4()}"
    request.state.request_id = request_id

    # Example: populate from your auth layer (never log raw tokens).
    # Only trust x-tenant-id / x-actor-id if your gateway strips client-supplied
    # copies and sets them after authentication; otherwise they can be spoofed.
    request.state.tenant_id = request.headers.get("x-tenant-id")
    request.state.actor_id = request.headers.get("x-actor-id")

    start = time.perf_counter()  # monotonic clock for durations
    response = await call_next(request)
    dur_ms = int((time.perf_counter() - start) * 1000)

    print(json.dumps({
        # utcnow() is deprecated; use an aware UTC datetime and a "Z" suffix
        "timestamp": datetime.datetime.now(datetime.timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z"),
        "severity": "INFO",
        "event": {"type": "http.request"},
        "request_id": request_id,
        "tenant_id": request.state.tenant_id,
        "actor": {"id": request.state.actor_id},
        "http": {"path": str(request.url.path), "status": response.status_code, "duration_ms": dur_ms},
    }))

    response.headers["X-Request-Id"] = request_id
    return response

Recommendation: separate “auth/audit events” from general logs

General app logs get sampled, rotated, or throttled. Audit streams should be durable by design.
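Python's standard logging module can keep the two streams apart. Give audit events a dedicated audit logger with its own handler and propagate set to False, so throttling the general app logger (here, via its level) never touches them. The logger names and the StringIO sinks are placeholders for your real destinations.

```python
import io
import json
import logging

def build_loggers(app_stream, audit_stream):
    """Give general logs and audit events separate loggers and handlers."""
    app = logging.getLogger("app")
    app.handlers.clear()
    app.setLevel(logging.WARNING)       # general logs can be throttled like this
    app.addHandler(logging.StreamHandler(app_stream))

    audit = logging.getLogger("audit")
    audit.handlers.clear()
    audit.setLevel(logging.INFO)        # audit level is pinned, not tuned for volume
    audit.propagate = False             # audit records never flow into app/root handlers
    audit.addHandler(logging.StreamHandler(audit_stream))
    return app, audit

# StringIO buffers stand in for real sinks (e.g. a rotating app log vs. a durable audit pipeline)
app_buf, audit_buf = io.StringIO(), io.StringIO()
app_log, audit_log = build_loggers(app_buf, audit_buf)
app_log.info("cache warmed")            # dropped: below the app logger's WARNING level
audit_log.info(json.dumps({"event": {"type": "admin.role_change", "category": "security"}}))
```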

Control #4 — Consistent timestamps (fix clock drift before it ruins forensics)

Rule: Use UTC everywhere. Store event time as timestamp and capture ingestion time separately.
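A minimal sketch of that rule, assuming producers send RFC 3339 timestamps with an explicit offset. The ingest_timestamp field name is hypothetical; use whatever your schema calls collector receipt time.

```python
from datetime import datetime, timezone

def _utc_iso(dt: datetime) -> str:
    """Format an aware datetime as RFC 3339 UTC with millisecond precision and a 'Z' suffix."""
    return dt.astimezone(timezone.utc).isoformat(timespec="milliseconds").replace("+00:00", "Z")

def normalize_times(event: dict, received_at: datetime) -> dict:
    """Return a copy with 'timestamp' rewritten in UTC and ingestion time kept separately.
    received_at must be timezone-aware (e.g. datetime.now(timezone.utc))."""
    # Before Python 3.11, fromisoformat() does not accept a trailing 'Z'
    event_time = datetime.fromisoformat(event["timestamp"].replace("Z", "+00:00"))
    if event_time.tzinfo is None:
        raise ValueError("timestamp has no UTC offset; refusing to guess the producer's timezone")
    out = dict(event)
    out["timestamp"] = _utc_iso(event_time)          # event time, as reported by the producer
    out["ingest_timestamp"] = _utc_iso(received_at)  # hypothetical field: collector receipt time
    return out
```

Rejecting offset-less timestamps is a deliberate choice here: silently assuming local time is exactly the kind of drift that corrupts timelines later.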

Add drift detection to your pipeline

Emit a metric: telemetry_clock_skew_ms (source timestamp vs collector receipt time). Alert when skew exceeds your tolerance.
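You can also report skew as a distribution (the p50/p95 drift from the Trust Score checklist) rather than event by event. The sketch below does this with Python's statistics module. Receipt-time skew mixes clock drift with transport and queueing delay, so set your tolerance above normal pipeline latency.

```python
import statistics
from datetime import datetime

def _parse(ts: str) -> datetime:
    return datetime.fromisoformat(ts.replace("Z", "+00:00"))

def skew_ms(event_ts: str, received_ts: str) -> float:
    """Absolute gap between producer timestamp and collector receipt time, in ms."""
    return abs((_parse(received_ts) - _parse(event_ts)).total_seconds()) * 1000

def skew_summary(samples_ms: list[float]) -> dict:
    """p50/p95/max for a window of skew samples (quantiles() needs at least two)."""
    cuts = statistics.quantiles(samples_ms, n=100, method="inclusive")
    return {"p50": cuts[49], "p95": cuts[94], "max": max(samples_ms)}
```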

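Example: simple skew calc in a collector/processor (Python fragment; event and received_time_ms come from your processor):

skew_ms = abs(received_time_ms - event["timestamp_ms"])
if skew_ms > 2000:  # tolerance in ms; tune to your environment
    event.setdefault("telemetry", {})["skew"] = "high"

Control #5 — Secure ingestion (validate sources + rate limit + anti-tamper)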

If attackers can inject fake telemetry or silence real telemetry, they can blind detection.

A secure ingestion baseline

- mTLS between workloads → collectors → storage

- Allowlist sources (workload identity, cert SAN, namespace)

- Rate limit per tenant/service to prevent telemetry DoS

- Backpressure queues so spikes don’t drop high-value data

- Access control: write-only for producers, read-only for investigators, limited admin
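Here is a minimal sketch of the allowlist and per-tenant/service rate-limit items at the application layer. It assumes the caller's identity string has already been extracted from a verified client certificate or workload token. The identity format, the rates, and the "throttle" outcome are illustrative. For high-value streams you would usually queue (backpressure) rather than drop.

```python
import time

class TokenBucket:
    """Token bucket: refills `rate` tokens per second, holds at most `burst`."""

    def __init__(self, rate: float, burst: float, now: float):
        self.rate, self.burst = rate, burst
        self.tokens, self.updated = burst, now

    def allow(self, now: float, cost: float = 1.0) -> bool:
        self.tokens = min(self.burst, self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False

class IngestGate:
    """Admit telemetry only from allowlisted identities, rate limited per (tenant, service)."""

    def __init__(self, allowed_identities: set[str], rate: float, burst: float, clock=time.monotonic):
        self.allowed = allowed_identities
        self.rate, self.burst, self.clock = rate, burst, clock
        self.buckets = {}  # (tenant, service) -> TokenBucket

    def admit(self, identity: str, tenant: str, service: str) -> str:
        if identity not in self.allowed:
            return "reject:unauthenticated"  # count these: it is a Trust Score KPI
        now = self.clock()
        key = (tenant, service)
        bucket = self.buckets.get(key)
        if bucket is None:
            bucket = self.buckets[key] = TokenBucket(self.rate, self.burst, now)
        return "accept" if bucket.allow(now) else "throttle"
```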

OpenTelemetry Collector (example config pattern)

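receivers:
  otlp:
    protocols:
      grpc:
        endpoint: 0.0.0.0:4317
        tls:
          cert_file: /etc/certs/collector.crt
          key_file: /etc/certs/collector.key
          client_ca_file: /etc/certs/ca.crt  # require client certs (mTLS)
      http:
        # No TLS on this port: keep it internal-only, e.g. behind a
        # TLS-terminating, rate-limiting proxy
        endpoint: 0.0.0.0:4318

processors:
  memory_limiter:
    check_interval: 1s
    limit_mib: 1024
  batch:
    timeout: 2s
    send_batch_size: 1024
  attributes/security_required_fields:
    actions:
      - key: deployment.environment
        action: upsert
        value: "prod"

exporters:
  # "debug" replaces the deprecated "logging" exporter; add it to a pipeline only while troubleshooting
  debug:
    verbosity: normal
  otlp:
    endpoint: telemetry-backend:4317
    tls:
      insecure: false
      # Client certificate for mTLS to the backend (example paths)
      ca_file: /etc/certs/ca.crt
      cert_file: /etc/certs/collector-client.crt
      key_file: /etc/certs/collector-client.key

service:
  pipelines:
    traces:
      receivers: [otlp]
      processors: [memory_limiter, attributes/security_required_fields, batch]
      exporters: [otlp]
    logs:
      receivers: [otlp]
      processors: [memory_limiter, attributes/security_required_fields, batch]
      exporters: [otlp]

NGINX rate limiting for ingestion endpoints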

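limit_req_zone $binary_remote_addr zone=telemetry:10m rate=50r/s;

server {
    listen 443 ssl;
    # "listen ... ssl" needs a certificate and key (example paths)
    ssl_certificate     /etc/nginx/certs/ingest.crt;
    ssl_certificate_key /etc/nginx/certs/ingest.key;

    location /v1/traces {
        # Keyed per client IP above; key on a tenant/service identifier if one is available
        limit_req zone=telemetry burst=200 nodelay;
        limit_req_status 429;  # default is 503
        proxy_pass http://otel-collector:4318;
    }
}

Anti-tamper: make logs tamper-evident (hash chaining)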

Tamper-evidence doesn’t have to be fancy. A simple rolling hash chain detects missing/edited events in a sequence.

import hashlib, json

def chain_hash(prev_hash: str, event: dict) -> str:
    payload = prev_hash + json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode()).hexdigest()

prev = "0" * 64
events = [
    {"id":"e1","timestamp":"2026-02-26T10:00:00Z","type":"auth.login","actor":"u_1"},
    {"id":"e2","timestamp":"2026-02-26T10:01:10Z","type":"authz.denied","actor":"u_1"},
]

for e in events:
    prev = chain_hash(prev, e)
    e["chain_hash"] = prev
    print(e["id"], e["chain_hash"])

Where to store the chain anchors: put periodic anchor hashes into a restricted, append-only store (or separate audit index) so attackers can’t rewrite history without detection.
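Tampering is only detected when someone recomputes the chain. The sketch below is a minimal verifier for the hash-chain construction shown earlier. It assumes each stored event keeps its chain_hash field and that anchor is a final hash read back from the restricted store. The function name and return convention are hypothetical. An attacker who rewrites every later hash as well is caught only by the protected anchor, which is why anchors belong in a separate store.

```python
import hashlib
import json
from typing import Optional

def chain_hash(prev_hash: str, event: dict) -> str:
    # Same construction as the hash-chaining example
    payload = prev_hash + json.dumps(event, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(payload.encode()).hexdigest()

def verify_chain(events: list, genesis: str = "0" * 64, anchor: Optional[str] = None) -> Optional[int]:
    """Return the index of the first event whose stored chain_hash doesn't match the
    recomputed chain, len(events) if only the final hash disagrees with the anchor
    (tail truncation), or None if everything verifies."""
    prev = genesis
    for i, e in enumerate(events):
        # The hash was computed before chain_hash was attached, so exclude it here
        body = {k: v for k, v in e.items() if k != "chain_hash"}
        prev = chain_hash(prev, body)
        if e.get("chain_hash") != prev:
            return i
    if anchor is not None and prev != anchor:
        return len(events)
    return None
```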

Control #6 — Cross-service correlation (link traces to auth events)

Security detection often lives in “auth events” (login, token refresh, MFA changes, role changes). Engineering performance lives in traces. Secure observability means joining them quickly.

Join strategy

- Always emit trace_id in your auth/audit events after a request has a trace context

- Ensure audit events include tenant_id, actor_id, session_id (stable ID), and auth.policy_version

Example: emit an auth decision event with trace correlation (TypeScript)

type AuthDecision = {
  decision: "allow" | "deny";
  reason: string;
  policy: string;
};

export function emitAuthzEvent(ctx: any, resource: any, auth: AuthDecision) {
  console.log(JSON.stringify({
    timestamp: new Date().toISOString(),
    event: { type: "authz.decision", category: "security" },
    trace_id: ctx.traceId,
    span_id: ctx.spanId,
    request_id: ctx.requestId,
    tenant_id: ctx.tenantId,
    actor: { id: ctx.actorId, role: ctx.actorRole },
    resource,
    auth
  }));
}

Example: correlation query (SQL-style)

-- Find denies that occurred within traces that later succeeded elsewhere (suspicious retries)
-- Assumes both tables carry a timestamp column
SELECT a.tenant_id, a.actor_id, a.trace_id, a.auth_reason, r.http_status
FROM authz_events a
JOIN request_logs r
  ON a.trace_id = r.trace_id
WHERE a.auth_decision = 'deny'
  AND r.http_status = 200
  AND r.timestamp > a.timestamp
  -- IN does not expand wildcards; use LIKE for the admin prefix
  AND (r.route = '/v1/export' OR r.route LIKE '/v1/admin/%');

Control #7 — Alerting that minimizes noise (but catches true signals)

Most alert fatigue comes from:

- missing context (can’t triage quickly)

- weak thresholds (no baseline)

- alerts that don’t map to an action

Noise-resistant alert patterns

- High-signal security events (page-worthy)
  - privilege change
  - API key creation/rotation
  - auth policy change
  - mass export
  - admin action outside expected network/device posture

- Behavioral deltas (baseline-aware)
  - “deny rate changed 5× vs 7-day baseline”
  - “new country/ASN for admin actor”
  - “sudden trace sampling drop in one service” (telemetry attack or outage)

- Pipeline health alerts (so you know when detection is blind)
  - span drop rate
  - exporter queue overflow
  - ingestion auth failures spike
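The "new country/ASN for admin actor" pattern can be sketched as a first-seen check with a short learning period, so a brand-new actor does not page on its first event. This sketch assumes events are already enriched with country and ASN (for example, by a GeoIP lookup at ingestion). The class name and the min_history default are hypothetical, and state is kept in memory only.

```python
from collections import defaultdict

class NewLocationDetector:
    """First-seen country/ASN check for admin actors (in-memory state only)."""

    def __init__(self, min_history: int = 5):
        self.min_history = min_history  # admin events to observe before alerting
        self.countries = defaultdict(set)
        self.asns = defaultdict(set)
        self.history = defaultdict(int)

    def observe(self, actor_id: str, role: str, country: str, asn: str) -> list[str]:
        """Record one event; return alert reasons (empty list = no alert)."""
        if role != "admin":
            return []
        reasons = []
        if self.history[actor_id] >= self.min_history:
            if country not in self.countries[actor_id]:
                reasons.append(f"new_country:{country}")
            if asn not in self.asns[actor_id]:
                reasons.append(f"new_asn:{asn}")
        self.countries[actor_id].add(country)
        self.asns[actor_id].add(asn)
        self.history[actor_id] += 1
        return reasons
```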

Example: simple “deny-rate spike” detector (Python)

def should_alert(current, baseline, factor=5, min_events=50):
    if current["total"] < min_events:
        return False  # too few events to judge
    baseline_rate = baseline["denies"] / max(baseline["total"], 1)
    current_rate = current["denies"] / max(current["total"], 1)
    return current_rate > baseline_rate * factor

current = {"denies": 180, "total": 2000}
baseline = {"denies": 40, "total": 2000}
# 9% vs. a 2% baseline is 4.5x, below factor=5, so this prints "OK"
print("ALERT" if should_alert(current, baseline) else "OK")

Post-incident forensics: reconstruct sequences from logs & metrics (fast)

When an incident hits, your team needs to answer quickly:

- When did it start?

- Which tenant, actor, and session?

- Which services were involved?

- What changed right before impact?

Forensics-ready storage habits

- Keep audit events in a separate, durable stream

- Enforce retention aligned to business risk and compliance needs

- Restrict deletion and modifications (even by admins)

- Document how to retrieve evidence (runbooks)

Timeline reconstruction script (from JSON logs)

import glob, json
from datetime import datetime

def parse_ts(s):
    # fromisoformat() before Python 3.11 does not accept a trailing "Z"
    return datetime.fromisoformat(s.replace("Z", "+00:00"))

events = []
for fn in sorted(glob.glob("logs/*.jsonl")):
    with open(fn, "r", encoding="utf-8") as f:
        for line in f:
            if not line.strip():
                continue  # skip blank lines
            e = json.loads(line)
            if e.get("tenant_id") == "t_1029" and e.get("actor", {}).get("id") == "u_88421":
                events.append(e)

events.sort(key=lambda e: parse_ts(e["timestamp"]))
for e in events[:50]:
    print(e["timestamp"], e.get("event", {}).get("type"), e.get("resource", {}))

If you want an independent, defensible investigation workflow (including evidence handling and reporting), start here:

https://www.pentesttesting.com/digital-forensic-analysis-services/

Where our free tool fits

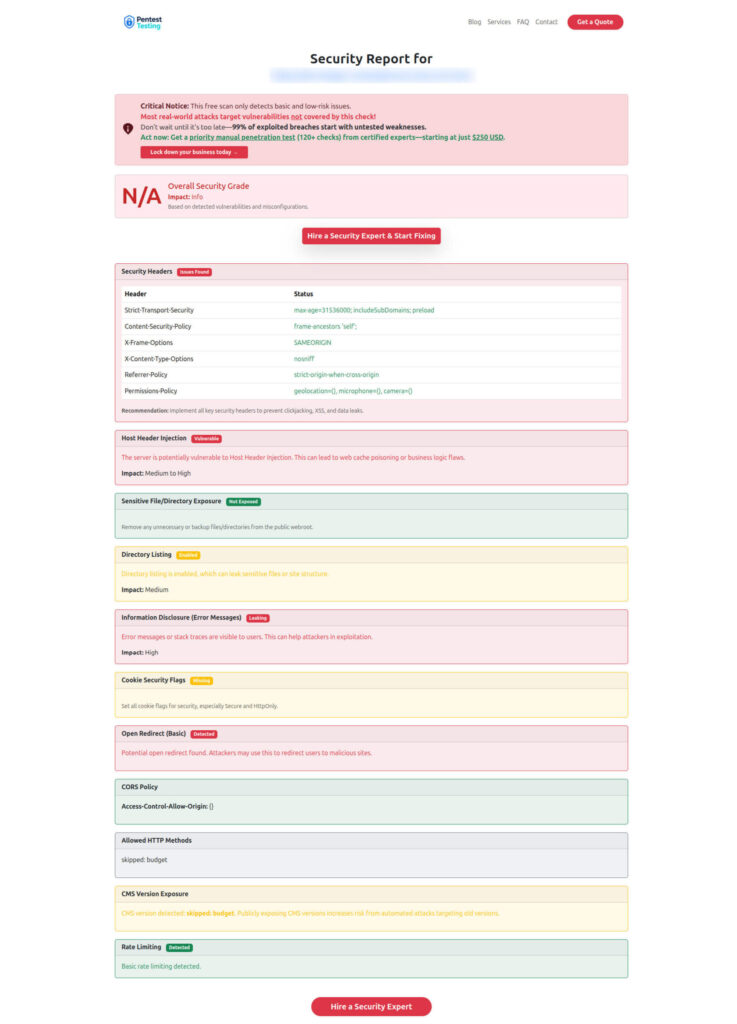

Free Website Vulnerability Scanner Tool Page

Sample Report to check Website Vulnerability

Need a formalized plan and prioritization?

- Risk assessment: https://www.pentesttesting.com/risk-assessment-services/

- Remediation support: https://www.pentesttesting.com/remediation-services/

Recommended reading (recent Cyber Rely posts)

- Forensics-ready telemetry patterns: https://www.cybersrely.com/forensics-ready-telemetry/

- Forensics-ready microservices design patterns: https://www.cybersrely.com/forensics-ready-microservices-design-patterns/

- Security chaos experiments (includes logging/alerting drills): https://www.cybersrely.com/security-chaos-experiments-for-ci-cd/

- Feature flag security controls (runtime control plane hardening): https://www.cybersrely.com/feature-flag-security-safe-canary-rollouts/

- Software supply chain security tactics: https://www.cybersrely.com/software-supply-chain-security-tactics/

What is “secure observability” in simple terms?

Secure observability means your logs, traces, and metrics are reliable enough to support detection and investigations—authentic sources, tamper resistance, consistent timestamps, and strong correlation identifiers.

How do I know if my observability pipeline is trustworthy?

Measure it. Track span drop rate, ingestion auth failures, schema compliance, and clock skew. If you can’t quantify pipeline health, you can’t trust detections built on it.

Should security telemetry be sampled?

General traces can be sampled, but security audit events should not. Keep a durable audit stream for critical actions (auth decisions, privilege changes, exports, config/secret changes).

What are the top 3 fields teams forget (that break investigations)?

tenant_id, actor_id (stable ID), and trace_id/request_id. Without them, you can’t reliably attribute actions or reconstruct timelines.

How do you reduce alert noise without missing real attacks?

Use baseline-aware thresholds, focus on high-signal events, and add pipeline health alerts so you know when detection is blind. Alerts should map to a clear runbook action.

When should we bring in external help?

If you need a defensible assessment, remediation plan, or incident-grade evidence handling: